By Tim Anderson, CEO & CTO of DigitalGlue and creative.space, Murrieta, California, USA.

We’ve all been there. It’s 2 a.m., and you’re deep in the edit for a high-stakes project. You have a perfect shot in your head: that low-angle, slow-motion clip of the CEO smiling, the one from the conference last year. You know it exists. But where is it? You fire up the Media Asset Management (MAM) system, your company’s expensive, supposedly state-of-the-art digital library. You try searching for “CEO_smile_conference.” Nothing. “CEO_keynote.” A thousand results, none of them right. You start clicking through folders: Events > 2023 > Q3 > CEO_Conference > Day_2 > Camera_A > Card_04. Your creative momentum grinds to a halt, replaced by the soul-crushing reality of digital archaeology.

The traditional MAM, for all its promises of organization and efficiency, has become a bottleneck. It’s a relic of a pre-AI era, a digital filing cabinet in a world that demands a search engine with a creative soul. But what if the solution to this problem wasn’t a better folder structure or a more complex tagging system? What if the solution were to eliminate the interface as we know it? What if, instead of navigating a maze of menus and filters, you could simply ask for what you want, in your own words, and get it instantly?

The traditional MAM is disappearing. It’s not being replaced by a newer, shinier version of itself, but by something fundamentally different: a single, powerful prompt box. This isn’t science fiction. It’s the inevitable convergence of three powerful forces: hyper-detailed metadata, advanced AI analysis, and the intuitive power of Large Language Models (LLMs). The future of media management isn’t a better filing cabinet; it’s a conversation with your content.

The Slow, Painful Death of the Digital Filing Cabinet

Let’s be fair. The MAM was born out of necessity. As we moved from tapes to files, we needed a way to wrangle the ever-growing terabytes of video, audio, and images. MAMs gave us a centralized repository, version control, and a way to attach some basic information to our media. They were a massive leap forward from shelves of hard drives and spreadsheets.

But the world has changed, and the traditional MAM hasn’t kept up. Its limitations are now painfully obvious:

- Clunky, Rigid Interfaces: Most MAMs are built on a folder-and-file paradigm that forces creatives to think like IT administrators. They are not designed for the fluid, non-linear way that creative ideas are formed.

- The Metadata Bottleneck: The value of a MAM is directly proportional to the quality of its metadata, which has historically relied on manual, human tagging. This process is not only time-consuming and expensive but also notoriously inconsistent. The way one producer tags a clip (“somber,” “moody”) might be completely different from another’s (“dramatic,” “cinematic”).

- Search That Lacks Context: A keyword search can’t understand intent. It can’t differentiate between a “sad smile” and a “joyful smile.” It can’t understand abstract concepts like “a sense of hope” or “building tension.” It’s a literal, one-dimensional tool in a multi-dimensional creative world.

- Siloed Knowledge: The most valuable information about a media asset is often scattered. The technical data (codec, resolution, color space) lives in one place. The user-generated tags live in another. The project it was used in, the director’s notes, the client feedback—all are disconnected. The MAM holds the file, but the story behind the file is lost.

This friction isn’t just an annoyance; it’s a direct tax on creativity. Every minute spent searching for a clip is a minute not spent creating. Every great shot that lies undiscovered in the archive because of poor metadata is a missed opportunity.

The Successor: A Symphony of AI, Metadata, and Language

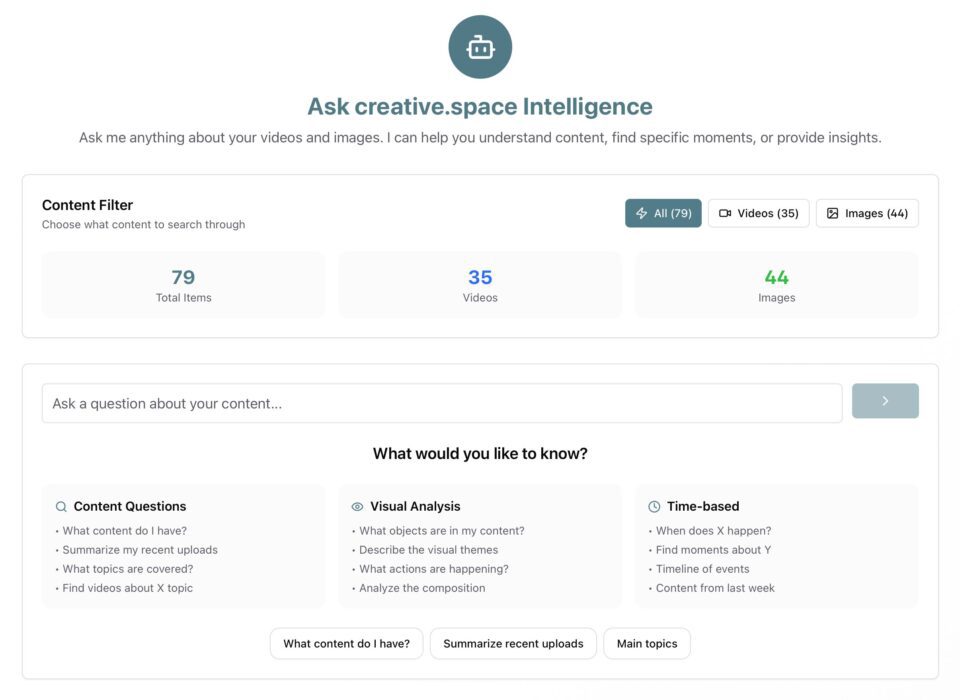

The replacement for the MAM is not a single piece of software but an intelligent ecosystem. At its heart is a simple, elegant idea: a single prompt box that serves as the universal interface to all your media. But behind that box lies a powerful and complex architecture.

Component 1: The All-Knowing Database

The foundation of this new paradigm is a database that knows everything about your media. It’s not just a list of files; it’s a rich, multi-layered tapestry of information, woven together from multiple sources:

- AI-Powered Analysis: This is the game-changer. Every asset in your library is automatically analyzed by a suite of AI models. This includes:

  - Speech-to-text transcription: Every word spoken in every video is transcribed and time-coded.

  - Object and scene recognition: The AI identifies objects, locations, and scenes (e.g., “a city street at night,” “a beach at sunrise”).

  - Facial and emotion recognition: It knows who is on screen and can even analyze their emotional state (“optimistic,” “concerned,” “joyful”).

  - Sentiment analysis: It understands the overall tone and mood of a scene.

  - Action recognition: It can identify specific actions, like “running,” “shaking hands,” or “typing on a keyboard.”

- Deep Technical Metadata: This system automatically ingests the exhaustive technical data embedded in modern media files. Think of it as a supercharged combination of all the data you’d get from tools like MediaInfo, ExifTool, REDline, ARRI RAW Meta Extract, and the Blackmagic RAW Extraction Tool. This includes everything from the basics (codec, frame rate, resolution) to the granular details (lens type, aperture, ISO, focal length, color space, LUTs applied).

- User-Generated Context: All the human knowledge associated with your media is also ingested. This includes user-created tags, project names, folder structures, collections, editor’s notes, director’s comments, and even transcripts from review and approval sessions.

- File Logistics: Finally, the system knows the practical details. Where is the file located (on-premises server, AWS, Azure)? Is there a high-res version and a low-res proxy? It also has the tools to mount cloud storage on demand to make the files accessible for production.
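To make the four layers concrete, here is a minimal sketch of what a single record in such a database might look like. Every class name, field name, and sample value below is hypothetical and chosen for illustration; a real system would likely use a proper database schema rather than in-memory objects.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TranscriptSegment:
    start_s: float  # segment start, in seconds from the start of the clip
    end_s: float    # segment end, in seconds
    text: str       # words spoken during the segment


@dataclass
class AssetRecord:
    asset_id: str
    # Layer 1: AI-powered analysis
    transcript: list = field(default_factory=list)  # list of TranscriptSegment
    labels: set = field(default_factory=set)        # objects, scenes, actions
    people: set = field(default_factory=set)        # recognized faces
    sentiment: Optional[str] = None                 # overall tone, if analyzed
    # Layer 2: deep technical metadata (codec, resolution, lens, and so on)
    technical: dict = field(default_factory=dict)
    # Layer 3: user-generated context
    tags: set = field(default_factory=set)
    project: Optional[str] = None
    notes: list = field(default_factory=list)
    # Layer 4: file logistics, mapping a rendition name to where it lives
    locations: dict = field(default_factory=dict)


# Hypothetical sample record; the paths and bucket name are made up.
clip = AssetRecord(
    asset_id="A001",
    labels={"drone", "coastline", "sunset"},
    technical={"codec": "ProRes 422 HQ", "width": 3840, "height": 2160},
    tags={"b-roll"},
    project="Summer Sizzle",
    locations={
        "proxy": "file:///proxies/A001.mp4",
        "hires": "s3://example-bucket/A001.mov",
    },
)
```

Keeping all four layers on one record is what lets a single request mix criteria such as "4K", "sunset", and a project name without the user knowing where each fact came from.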

Component 2: The LLM as the Ultimate Librarian

This is where the magic happens. The prompt box is connected to a Large Language Model (LLM) that is grounded in this massive, interconnected database, whether through training or through retrieval at query time. The LLM acts as the ultimate librarian, one that you can talk to. It understands natural language, nuance, and creative intent.

You can move from simple, literal searches to complex, creative requests:

- Simple: “Show me all the 4K drone shots of a coastline at sunset.”

- More Specific: “Find all the interviews with Dr. Evans from the ‘Future Forward’ project where she talks about AI and sounds optimistic.”

- Truly Creative: “I’m cutting a 15-second social media promo for our summer campaign. I need a series of fast-paced, energetic shots from the ‘Summer Sizzle’ project, preferably with a shallow depth of field and a warm color palette. Show me options that build in intensity.”
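One plausible way to wire this up, and it is only an assumption about the architecture, is for the LLM to translate the request into a structured filter that ordinary code then runs against the database. The sketch below covers only that second step: the `StructuredQuery` is written by hand as a stand-in for what a model might emit for the "Simple" request above, and all names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Clip:
    clip_id: str
    labels: set = field(default_factory=set)
    width: int = 0
    project: str = ""


@dataclass
class StructuredQuery:
    required_labels: set = field(default_factory=set)
    min_width: int = 0   # 0 means "any resolution"
    project: str = ""    # empty string means "any project"


def matches(clip, query):
    """Return True if the clip satisfies every criterion in the query."""
    if not query.required_labels <= clip.labels:  # subset test
        return False
    if clip.width < query.min_width:
        return False
    if query.project and clip.project != query.project:
        return False
    return True


def search(library, query):
    """Return the IDs of all clips that match, in library order."""
    return [clip.clip_id for clip in library if matches(clip, query)]


library = [
    Clip("A001", {"drone", "coastline", "sunset"}, 3840, "Summer Sizzle"),
    Clip("A002", {"drone", "coastline", "sunset"}, 1920, "Summer Sizzle"),
    Clip("A003", {"interview", "office"}, 3840, "Future Forward"),
]

# Hand-written stand-in for:
# "Show me all the 4K drone shots of a coastline at sunset."
# "4K" is treated here as at least 3840 pixels wide, which is an assumption.
query = StructuredQuery(
    required_labels={"drone", "coastline", "sunset"},
    min_width=3840,
)
results = search(library, query)
```

The more creative requests would need more than exact filters, for example ranking by similarity, but the division of labor stays the same: the model interprets intent, and the database does the lookup.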

Component 3: The Intelligent, Actionable Response

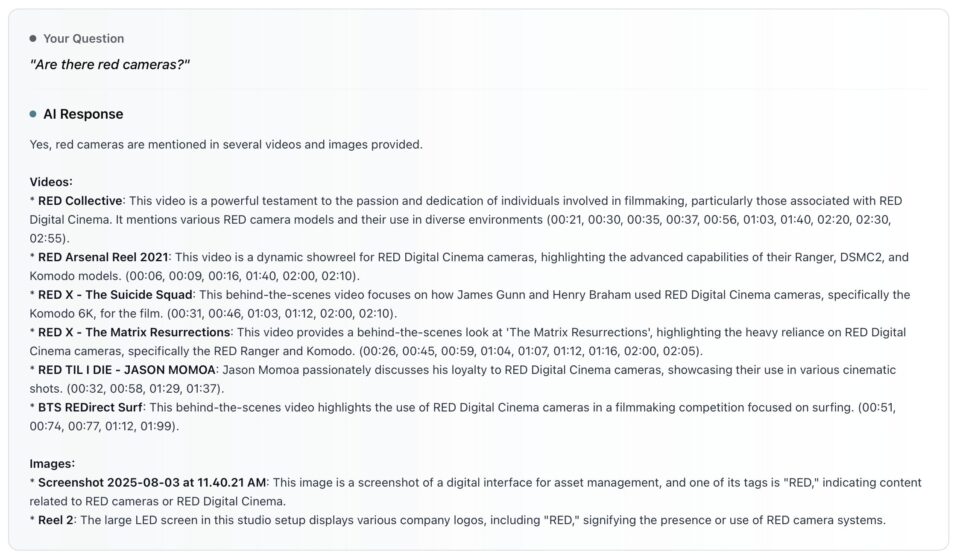

What you get back is not just a bin of 50 clips. The system provides a curated, intelligent response:

- Curated Clips: A selection of the most relevant clips, presented with playable proxies and high-quality thumbnails.

- Timestamp Direct Access: The response includes exact, clickable timecodes to instantly play video from the relevant moment.

- Natural Language Explanations: A plain-language explanation of why each clip was selected. For example: “Here are 12 clips that match your request. The first four have a strong sense of energy and a shallow depth of field. The next five feature a warmer color palette, and the final three are from the same shoot and could be cut together to build intensity.”

- Direct File Access: Direct links to the high-resolution files, with a button to mount the necessary cloud storage or network volume.

- Creative Suggestions: Alternative shots that you might not have considered.

In short, the response is built to accelerate the creative process rather than to hand back a list of files.
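As a rough illustration of the timestamp and explanation pieces, the sketch below formats a match position as a non-drop-frame timecode and pairs it with the reason the clip was chosen. The `ClipHit` structure, its fields, and the example URL are hypothetical, and the timecode helper assumes an integer frame rate; fractional rates such as 29.97 need drop-frame handling that is not shown here.

```python
from dataclasses import dataclass


def to_timecode(seconds, fps):
    """Format seconds as non-drop-frame HH:MM:SS:FF for an integer frame rate."""
    total_frames = round(seconds * fps)
    frames = total_frames % fps
    total_seconds = total_frames // fps
    hours, remainder = divmod(total_seconds, 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}:{frames:02d}"


@dataclass
class ClipHit:
    clip_id: str
    start_s: float   # where the relevant moment begins, in seconds
    fps: int         # integer frame rate of the clip
    reason: str      # plain-language explanation of why it was selected
    proxy_url: str   # playable low-res proxy

    def summary(self):
        """One line a response could show next to the thumbnail."""
        timecode = to_timecode(self.start_s, self.fps)
        return f"{self.clip_id} @ {timecode}: {self.reason}"


# Hypothetical hit; the URL uses the reserved .invalid domain on purpose.
hit = ClipHit(
    clip_id="A001",
    start_s=75.48,
    fps=25,
    reason="shallow depth of field, high energy",
    proxy_url="https://example.invalid/proxies/A001.mp4",
)
print(hit.summary())
```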

Key AI Analysis Capabilities: Seeing the Unseen

The engine driving this revolution is a deep stack of AI models that analyze every frame and pixel, creating a rich, searchable context that was previously impossible.

For Your Video Library:

- Visual Analysis: The AI doesn’t just see pixels; it understands content. It identifies actions, objects, logos, and people, turning your entire archive into a queryable database of visual information.

- Transcription: Every spoken word is converted to text and time-coded. Searching for a specific phrase is no longer a guessing game; it’s a precise command that takes you to the exact moment in the video.

- Scene Detection: The system automatically logs every shot change, allowing you to navigate long clips with ease and find the perfect transition point.

- AI Insights: Beyond simple tagging, the AI generates concise summaries, identifies key insights, and creates intelligent smart tags for every video, adding a layer of understanding that helps you find not just what you asked for, but what you truly need.
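Two of these capabilities are simple enough to sketch in a few lines. The first function searches a time-coded transcript for a phrase and returns the moments where it occurs. The second is a deliberately naive shot-change detector that flags a cut wherever average frame brightness jumps by more than a threshold. Both are toy versions under stated assumptions: production systems typically compare histograms or learned features rather than a single brightness value, and the sample data is invented.

```python
def find_phrase(segments, phrase):
    """Return start times (seconds) of segments whose text contains the phrase.

    `segments` is a list of (start_seconds, text) pairs. Matching is
    case-insensitive and does not span segment boundaries.
    """
    needle = phrase.casefold()
    return [start for start, text in segments if needle in text.casefold()]


def detect_cuts(frame_means, threshold):
    """Return indices of frames whose brightness differs from the previous
    frame by more than `threshold`. A toy stand-in for scene detection."""
    return [
        i
        for i in range(1, len(frame_means))
        if abs(frame_means[i] - frame_means[i - 1]) > threshold
    ]


# Invented sample data for illustration.
segments = [
    (0.0, "Welcome everyone to the keynote."),
    (12.5, "Artificial intelligence is changing how we edit."),
    (31.0, "We are optimistic about artificial intelligence in post."),
]
hits = find_phrase(segments, "artificial intelligence")

frame_means = [50, 52, 51, 120, 121, 119, 30, 31]  # average brightness per frame
cuts = detect_cuts(frame_means, threshold=40)
```

Each start time returned by `find_phrase` can be handed straight to a player, which is what turns a transcript from a text file into a navigation tool.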

For Your Image Library:

- Visual Analysis: Similar to video, images are deconstructed to identify objects, faces, landmarks, and brand logos.

- Text OCR: Any printed or handwritten text within an image is extracted and indexed, making signs, documents, and screen captures fully searchable.

- Composition Analysis: The AI understands the art within the image, analyzing its color palette and the compositional rules at play.

- Web Detection: Your images are cross-referenced with the web to find similar images, identify web entities, and provide best-guess labels, adding a world of context to your internal library.

- AI Insights: Every image gets a concise summary and a set of smart tags, making your entire photo library as easy to search as a conversation.
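Color palette analysis is one of the easier items on this list to demystify. A minimal sketch, assuming the image is already available as a list of RGB tuples, is to snap each channel to a handful of coarse levels and count which resulting colors occur most often. The function name and parameters are hypothetical, and real palette extraction often uses clustering instead of fixed buckets.

```python
from collections import Counter


def dominant_colors(pixels, levels=4, top=3):
    """Return the `top` most common colors after coarse quantization.

    Each 0-255 channel is snapped to the midpoint of one of `levels`
    equal-width buckets, so near-identical shades are counted together.
    """
    step = 256 // levels

    def bucket(channel):
        return (channel // step) * step + step // 2

    counts = Counter(tuple(bucket(c) for c in pixel) for pixel in pixels)
    return [color for color, _ in counts.most_common(top)]


# Invented 12-pixel "image": mostly warm orange, some blue, one black pixel.
pixels = (
    [(250, 120, 30)] * 6
    + [(240, 110, 20)] * 2
    + [(10, 20, 200)] * 3
    + [(0, 0, 0)]
)
palette = dominant_colors(pixels, top=2)
```

Storing a short palette like this alongside each image is what makes a request for "a warm color palette" answerable with a simple comparison.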

A New Era of Creative Velocity

This shift does more than just save time; it fundamentally reallocates your most valuable resource: creative energy. By automating the tedious, administrative tasks that bog down production, the new AI-powered system allows creatives to spend more time creating.

Imagine a workflow where 95% of your time is spent on creative work and only 5% on searching and administration, down from the 40% that is often lost to the friction of traditional MAMs. This is the promise of creative velocity.

- From Search to Discovery: You’ll uncover hidden gems in your archive that would have been lost forever. That perfect shot from six years ago that no one tagged correctly is now instantly accessible.

- The Speed of Thought: The time between having a creative idea and executing it collapses. You can try out more ideas, experiment more freely, and spend your time telling stories, not managing data.

- Democratization of Access: A junior editor or a producer can now find what they need as easily as a senior archivist. You no longer need to know the “right” way to search. You just need to be able to describe what you want.

The traditional MAM is not just becoming obsolete; it’s being absorbed, its functions integrated into a smarter, more intuitive, and vastly more powerful intelligence layer. The future of media management is not about organizing files. It’s about understanding content. The future is a conversation, and it all starts with a single prompt.