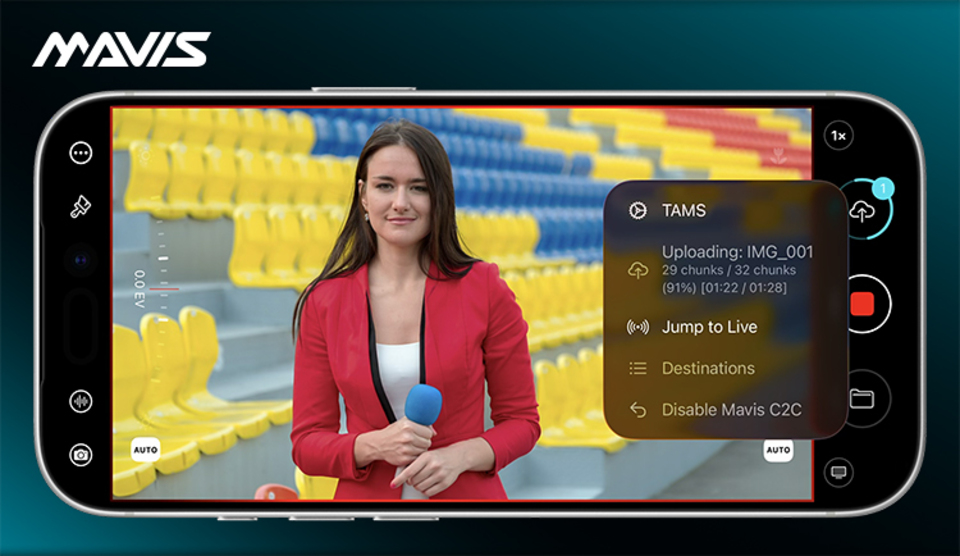

Mavis has introduced a new beta integration between Mavis Camera and TAMS as part of its Camera to Cloud service.

The new workflow allows video captured in Mavis Camera to upload directly to TAMS in real time. As a result, the iPhone can now serve as a professional capture device for fast-turnaround, cloud-native production.

The company says this workflow builds on its existing Camera to Cloud model. Instead of sending media as one continuous stream, Mavis uploads content progressively in separate chunks.

Mavis Camera and TAMS workflow

Mavis says the chunk-based approach helps content keep moving even when network conditions are limited or unstable. At the same time, the workflow keeps the timing data and metadata needed to rebuild a clear timeline later in production.
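To illustrate why timing data matters in a chunk-based upload, here is a minimal Python sketch. It is not Mavis's implementation: the `Chunk` type, its fields, and `rebuild_timeline` are hypothetical names. It assumes each chunk carries a start time and duration in milliseconds, so chunks that arrive out of order can still be placed on the timeline and checked for gaps.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """Hypothetical media chunk: timing metadata plus an opaque payload."""
    start_ms: int      # position of the chunk on the recording timeline
    duration_ms: int   # length of the chunk
    payload: bytes = b""

    @property
    def end_ms(self) -> int:
        return self.start_ms + self.duration_ms


def rebuild_timeline(received):
    """Order chunks by start time and report the gaps still missing.

    Returns (ordered_chunks, gaps), where gaps is a list of
    (gap_start_ms, gap_end_ms) tuples.
    """
    ordered = sorted(received, key=lambda c: c.start_ms)
    gaps = []
    for prev, nxt in zip(ordered, ordered[1:]):
        if nxt.start_ms > prev.end_ms:
            gaps.append((prev.end_ms, nxt.start_ms))
    return ordered, gaps


# Chunks arrive out of order, and one (2000-4000 ms) has not arrived yet.
arrived = [Chunk(4000, 2000), Chunk(0, 2000), Chunk(6000, 2000)]
timeline, missing = rebuild_timeline(arrived)
print([c.start_ms for c in timeline])  # [0, 4000, 6000]
print(missing)                         # [(2000, 4000)]
```

In this sketch the receiver never depends on arrival order. The timing fields alone are enough to lay out what has arrived and to see which span is still outstanding.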

According to the company, preserving that timing information is one of the key reasons the integration matters for cloud-based editorial workflows.

What TAMS does in the Mavis workflow

TAMS (Time-Addressable Media Store), which BBC R&D developed, uses an open API model for storing and accessing media. Instead of relying on large file-based structures or continuous streams, it works with time-addressable chunks stored in object storage.
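The idea of time-addressable chunks can be sketched with a toy in-memory index. This is an illustrative model only, not the TAMS API: the class `TimeAddressableStore`, its methods, and the object keys are all hypothetical. It assumes each stored chunk is described by a start time, an end time, and a key pointing at an object in storage.

```python
class TimeAddressableStore:
    """Toy in-memory stand-in for an index over chunks in object storage."""

    def __init__(self):
        self._segments = []  # list of (start_ms, end_ms, object_key)

    def put(self, start_ms, end_ms, object_key):
        """Register a chunk covering the half-open range [start_ms, end_ms)."""
        self._segments.append((start_ms, end_ms, object_key))
        self._segments.sort()  # keep segments in timeline order

    def query(self, start_ms, end_ms):
        """Return keys of chunks overlapping [start_ms, end_ms)."""
        return [
            key
            for seg_start, seg_end, key in self._segments
            if seg_start < end_ms and seg_end > start_ms
        ]


store = TimeAddressableStore()
store.put(0, 2000, "chunk-0000")
store.put(2000, 4000, "chunk-0001")
store.put(4000, 6000, "chunk-0002")
print(store.query(1500, 4000))  # ['chunk-0000', 'chunk-0001']
```

A client asks for a time range rather than a file, and gets back only the chunks that cover it.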

Mavis says this approach fits well with its own Camera to Cloud method, so the integration creates a more flexible route for real-time acquisition and later editorial use.

Jump to live for fast-turnaround production

The beta proof of concept also adds a jump-to-live function. If network performance slows and uploads begin to queue, the upload head can move forward to the newest live material.

As a result, the latest content reaches the destination first, while older queued sections continue to upload afterward. Mavis says this makes the workflow especially useful for news, live events, and other fast-turnaround environments where speed is critical.
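The jump-to-live behavior described above can be approximated with a small queueing sketch. It makes simplifying assumptions that do not come from Mavis: the function `upload_order` and the `backlog_limit` threshold are hypothetical, and the queue is treated as a static snapshot rather than a stream that keeps growing during upload.

```python
from collections import deque


def upload_order(pending, backlog_limit):
    """Return the order in which queued chunk ids would be sent.

    pending: chunk ids, oldest first.
    backlog_limit: hypothetical threshold; if more chunks than this are
    waiting, the newest chunk is sent first and the older ones are
    backfilled afterward in their original order.
    """
    queue = deque(pending)
    order = []
    if len(queue) > backlog_limit:
        order.append(queue.pop())      # jump to live: newest chunk goes first
    while queue:
        order.append(queue.popleft())  # backfill the rest, oldest first
    return order


print(upload_order([1, 2, 3, 4, 5], backlog_limit=3))  # [5, 1, 2, 3, 4]
print(upload_order([1, 2], backlog_limit=3))           # [1, 2]
```

Because each chunk carries its own timing data, sending chunk 5 before chunks 1 to 4 does not disturb the final timeline. The destination can place the later arrivals in their correct positions.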

Mavis Camera, TAMS, and external inputs

Mavis says the new TAMS integration also works with the expanding range of external inputs supported by Mavis Camera. Users can connect devices such as the Atomos Ninja Phone or Accsoon SeeMo series to bring HDMI or SDI sources into the workflow.

In addition, NDI input support allows IP-based video sources to enter the same TAMS pipeline, so the workflow is not limited to the iPhone’s internal camera. Instead, Mavis positions the app as a bridge between mobile, external, and networked production setups.

Patrick Holroyd on the Mavis TAMS integration

Patrick Holroyd, CEO of Mavis, said the integration shows how cloud-native and fast-turnaround workflows can move onto devices users already own. He said the connection between Mavis Camera and TAMS demonstrates a practical way to capture on an iPhone, upload timed media segments in real time, and support editorial workflows without relying on traditional streaming infrastructure.