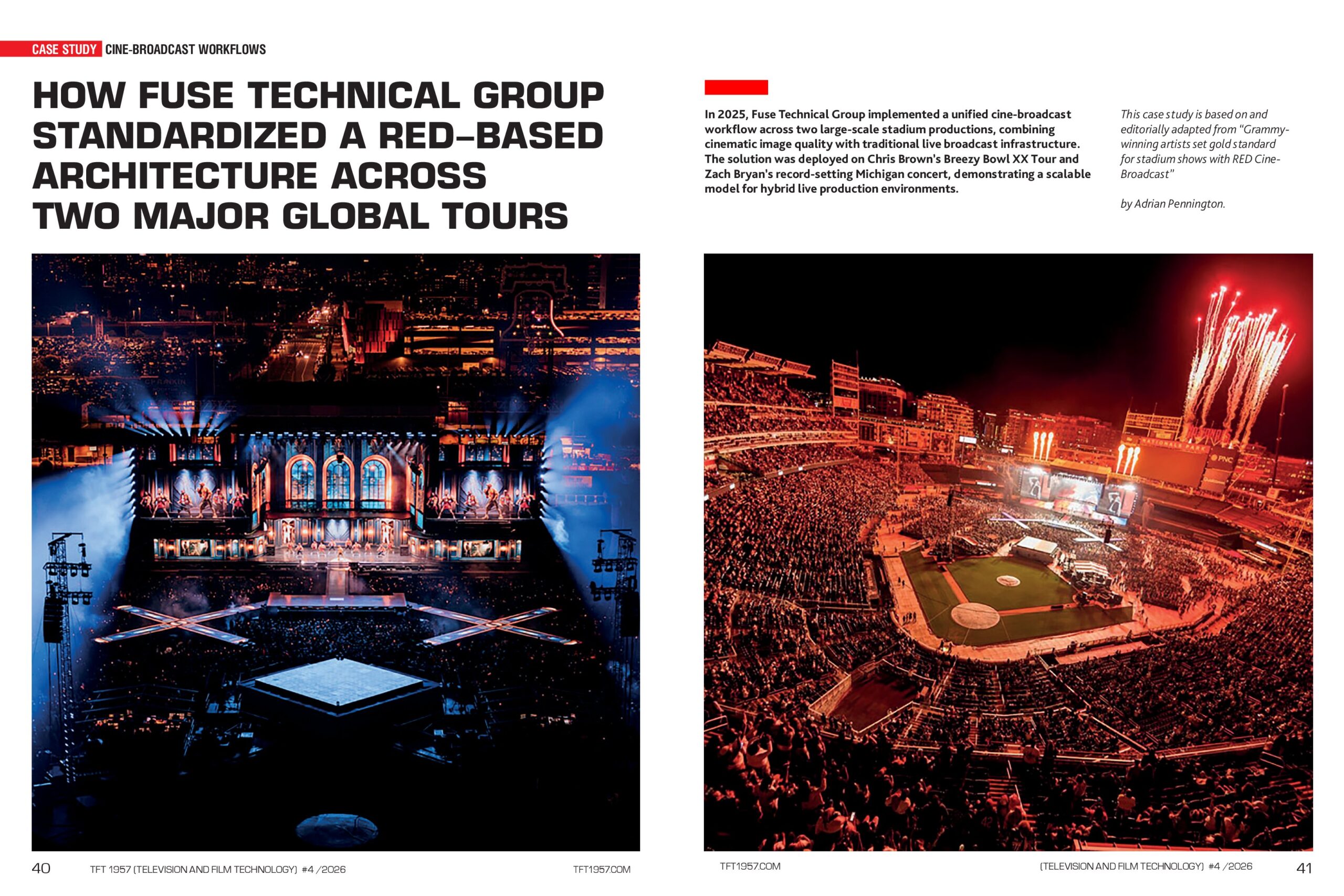

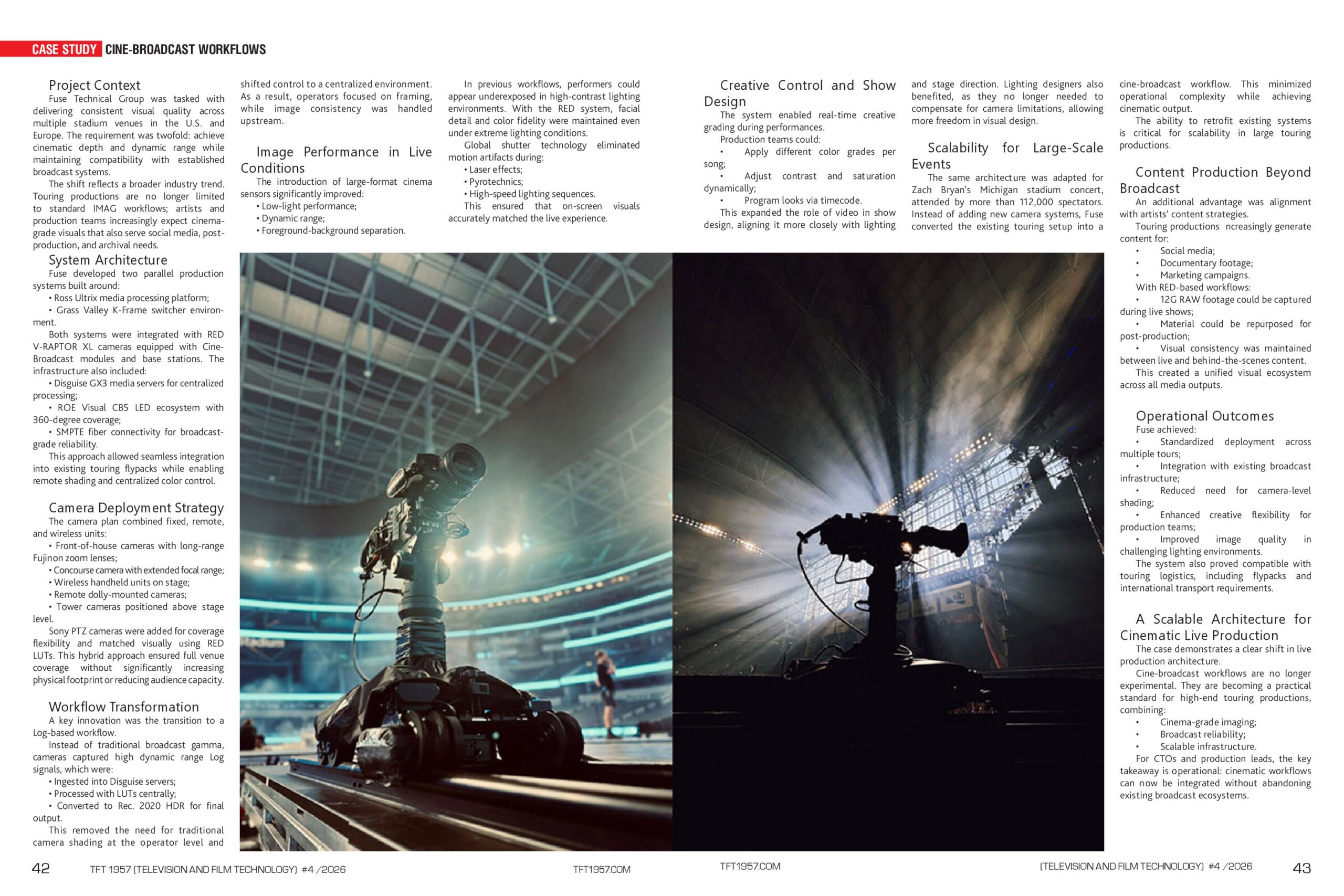

In 2025, Fuse Technical Group implemented a unified cine-broadcast workflow across two large-scale stadium productions, combining cinematic image quality with traditional live broadcast infrastructure. The solution was deployed on Chris Brown’s Breezy Bowl XX Tour and Zach Bryan’s record-setting Michigan concert, demonstrating a scalable model for hybrid live production environments.

This article was published in TFT1957 | TV & Film Technology Magazine, April 2026, Issue 792.

This case study is based on and editorially adapted from “Grammy-winning artists set gold standard for stadium shows with RED Cine-Broadcast” by Adrian Pennington.

Project Context

Fuse Technical Group was tasked with delivering consistent visual quality across multiple stadium venues in the U.S. and Europe. The requirement was twofold: achieve cinematic depth and dynamic range while maintaining compatibility with established broadcast systems.

The shift reflects a broader industry trend. Touring productions are no longer limited to standard IMAG workflows. Artists and production teams increasingly expect cinema-grade visuals that also serve social media, post-production, and archival needs.

System Architecture

Fuse developed two parallel production systems built around:

- Ross Ultrix media processing platform.

- Grass Valley K-Frame switcher environment.

Both systems were integrated with RED V-RAPTOR XL cameras equipped with Cine-Broadcast modules and base stations. In addition, the infrastructure included:

- Disguise GX3 media servers for centralized processing.

- ROE Visual CB5 LED ecosystem with 360-degree coverage.

- SMPTE fiber connectivity for broadcast-grade reliability.

This approach allowed seamless integration into existing touring flypacks while enabling remote shading and centralized color control.

Camera Deployment Strategy

The camera plan combined fixed, remote, and wireless units:

- Front-of-house cameras with long-range Fujinon zoom lenses.

- Concourse camera with extended focal range.

- Wireless handheld units on stage.

- Remote dolly-mounted cameras.

- Tower cameras positioned above stage level.

Sony PTZ cameras were added for coverage flexibility and matched visually using RED LUTs. This hybrid approach ensured full venue coverage without significantly increasing physical footprint or reducing audience capacity.

Workflow Transformation

A key innovation was the transition to a Log-based workflow. Instead of traditional broadcast gamma, cameras captured high dynamic range Log signals, which were:

- Ingested into Disguise servers.

- Processed with LUTs centrally.

- Converted to Rec. 2020 HDR for final output.

This removed the need for traditional camera shading at the operator level and shifted control to a centralized environment. As a result, operators focused on framing, while image consistency was handled upstream.
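As a rough illustration of the centralized step, the sketch below runs every camera feed through one shared lookup table, so no per-camera adjustment is needed. It is a simplified assumption, not Fuse's or Disguise's implementation: production LUTs are typically 3D and run inside the media server, and the names `apply_lut_1d` and `grade_feeds` are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Pixel = Tuple[float, float, float]  # normalized R, G, B code values in 0..1


def apply_lut_1d(value: float, lut: Sequence[float]) -> float:
    """Map one normalized code value through a 1D LUT with linear interpolation.

    The LUT entries are assumed to be evenly spaced over the input range 0..1.
    """
    if len(lut) < 2:
        raise ValueError("LUT needs at least two entries")
    v = min(max(value, 0.0), 1.0)        # clamp out-of-range input
    pos = v * (len(lut) - 1)             # fractional index into the table
    lo = int(pos)
    hi = min(lo + 1, len(lut) - 1)
    frac = pos - lo
    return lut[lo] * (1.0 - frac) + lut[hi] * frac


def grade_feeds(feeds: Dict[str, List[Pixel]], lut: Sequence[float]) -> Dict[str, List[Pixel]]:
    """Apply the same LUT to every camera feed (hypothetical central grading step)."""
    return {
        camera: [tuple(apply_lut_1d(c, lut) for c in px) for px in pixels]
        for camera, pixels in feeds.items()
    }


if __name__ == "__main__":
    show_lut = [0.0, 0.2, 1.0]           # toy three-point curve, illustration only
    feeds = {"foh": [(0.0, 0.5, 1.0)], "handheld": [(0.25, 0.25, 0.25)]}
    print(grade_feeds(feeds, show_lut))
```

Because the table lives in one place, changing the look for every camera means swapping a single LUT rather than re-shading each unit.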

Image Performance in Live Conditions

The move to large-format cinema sensors significantly improved:

- Low-light performance.

- Dynamic range.

- Foreground-background separation.

In previous workflows, performers could appear underexposed in high-contrast lighting environments. With the RED system, facial detail and color fidelity were maintained even under extreme lighting conditions.

Global shutter technology eliminated motion artifacts during:

- Laser effects.

- Pyrotechnics.

- High-speed lighting sequences.

This ensured that on-screen visuals accurately matched the live experience.

Creative Control and Show Design

The system enabled real-time creative grading during performances.

Production teams could:

- Apply different color grades per song.

- Adjust contrast and saturation dynamically.

- Program looks via timecode.

This expanded the role of video in show design, aligning it more closely with lighting and stage direction. Lighting designers also benefited, as they no longer needed to compensate for camera limitations, allowing more freedom in visual design.
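Timecode-driven looks can be pictured as a cue list: each cue names a grade and the timecode at which it takes over. The following is a minimal sketch under stated assumptions (non-drop-frame `HH:MM:SS:FF` timecode, 30 fps, and hypothetical look names and class `LookCueList`); it does not reflect how the show's servers actually store cues.

```python
from bisect import bisect_right
from typing import Iterable, Tuple


def timecode_to_frames(tc: str, fps: int = 30) -> int:
    """Convert non-drop-frame 'HH:MM:SS:FF' timecode to an absolute frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    if ff >= fps:
        raise ValueError(f"frame field {ff} is out of range for {fps} fps")
    return ((hh * 60 + mm) * 60 + ss) * fps + ff


class LookCueList:
    """Hypothetical cue list mapping timecode start points to named looks."""

    def __init__(self, cues: Iterable[Tuple[str, str]], fps: int = 30) -> None:
        ordered = sorted((timecode_to_frames(tc, fps), name) for tc, name in cues)
        self._starts = [start for start, _ in ordered]
        self._names = [name for _, name in ordered]
        self._fps = fps

    def active_look(self, tc: str, default: str = "neutral") -> str:
        """Return the look whose cue most recently started at or before tc."""
        idx = bisect_right(self._starts, timecode_to_frames(tc, self._fps)) - 1
        return self._names[idx] if idx >= 0 else default


if __name__ == "__main__":
    cues = LookCueList([
        ("01:00:00:00", "warm_amber"),   # illustrative look names
        ("01:04:30:00", "cool_blue"),
    ])
    print(cues.active_look("01:02:00:00"))
```

Keeping the cues sorted and using a binary search means the active look can be resolved on every frame without scanning the whole list.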

Scalability for Large-Scale Events

The same architecture was adapted for Zach Bryan’s Michigan stadium concert, attended by more than 112,000 spectators. Instead of adding new camera systems, Fuse converted the existing touring setup into a cine-broadcast workflow. This minimized operational complexity while achieving cinematic output.

The ability to retrofit existing systems is critical for scalability in large touring productions.

Content Production Beyond Broadcast

An additional advantage was alignment with artists’ content strategies.

Touring productions increasingly generate content for:

- Social media.

- Documentary footage.

- Marketing campaigns.

With RED-based workflows:

- 12G RAW footage could be captured during live shows.

- Material could be repurposed for post-production.

- Visual consistency was maintained between live and behind-the-scenes content.

This created a unified visual ecosystem across all media outputs.

Operational Outcomes

Fuse achieved:

- Standardized deployment across multiple tours.

- Integration with existing broadcast infrastructure.

- Reduced need for camera-level shading.

- Enhanced creative flexibility for production teams.

- Improved image quality in challenging lighting environments.

The system also proved compatible with touring logistics, including flypacks and international transport requirements.

A Scalable Architecture for Cinematic Live Production

The case demonstrates a clear shift in live production architecture. Cine-broadcast workflows are no longer experimental. They are becoming a practical standard for high-end touring productions, combining:

- Cinema-grade imaging.

- Broadcast reliability.

- Scalable infrastructure.

For CTOs and production leads, the key takeaway is operational: cinematic workflows can now be integrated without abandoning existing broadcast ecosystems.

https://tkt1957.com/tft1957-tv-film-tech-magazine-special-edition-for-nab-show-2026