Jeff Greenberg, CEO of J Greenberg Consulting, examines how AI-generated tools are reshaping post-production workflows. As non-developers begin building functional software through “vibe coding,” the piece explores the shift from traditional development to AI-driven creation. It also examines the operational risks and implications this introduces for production environments.

This article was published in TFT1957 | TV & Film Technology Magazine, April 2026, Issue 792.

The New Reality

Every developer and post-production professional I know has embraced AI tools to accelerate their coding output. But something more significant is happening alongside that. People with little formal knowledge of software development are now building functional products and bringing them to market.

From AI-Assisted Coding to Vibe Coding

When the AI does 100% of the work, the term for this is vibe coding. You describe what you want to an AI, and it writes the entire codebase. You prompt, test, and iterate, but you are not writing code yourself. This is distinct from AI-assisted development, where a skilled programmer uses AI to speed up work they already understand.

The distinction matters because vibe coding lowers the barrier to building exactly the tool you need. It also determines how much the builder can evaluate, debug, and maintain what they have made.

I am in this category myself. I built my first tool in October 2024: a failed attempt to fix a track issue in Premiere Pro. As of March 2026, I have built several tools, including a macOS screen utility that already has requests for a Windows port. I do not fully understand the underlying platform code in either case. For the record, I am not a complete coding neophyte; I originally studied mathematics before the discipline was called computer science (in a different century!). But I am relying on Claude to build things I could not build alone, and I am far from the only one.

The Scale of AI Tool Creation in Post-Production

This is widespread and very public. I moderate five video editing subreddits with over a million combined members, including r/editors, and I am seeing 10 to 20 new tool submissions per week: people offering utilities that solve specific production problems, often with a commercial angle. Some are DCTLs. Some are metadata utilities. A large portion are captioning and visual recognition tools. Of these, 90% are vibe-coded. There is nothing wrong with that, but it changes the risk profile of the tools your facility may be adopting.

The tools themselves. If they are not on your radar yet, they should be. Claude Code and ChatGPT Codex are the two leading AI coding engines. They integrate with development environments like VS Code, Cursor, and Kiro, where users describe problems in plain English and get functional code back. The barrier to entry for building production tools has dropped to nearly zero.

Why Post-Production Is Uniquely Ripe for This

You know the joke. Somebody looks at 17 competing standards and says we should unify them. They build a new one, and now there are 18 standards.

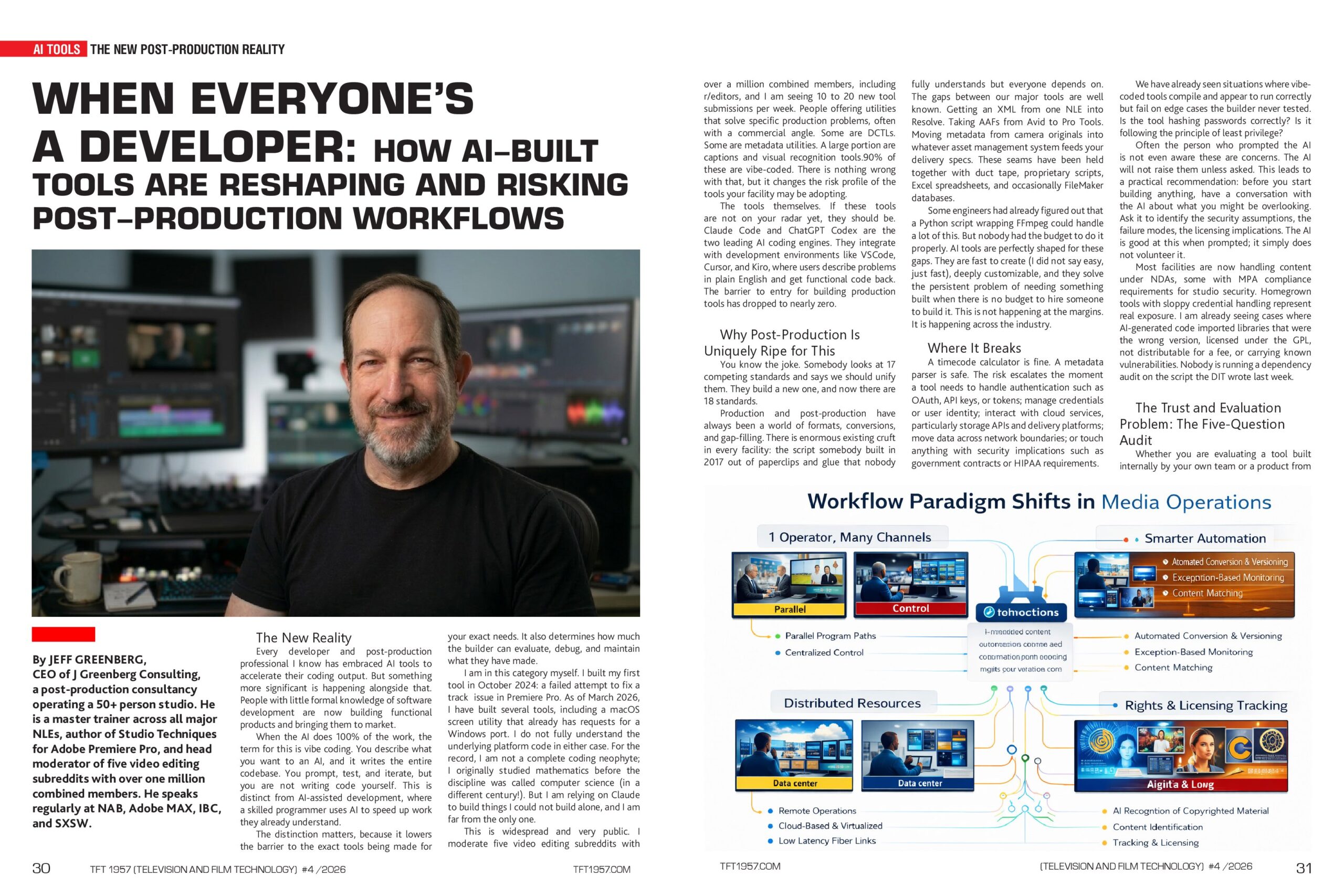

Production and post-production have always been a world of formats, conversions, and gap-filling. There is enormous existing cruft in every facility: the script somebody built in 2017 out of paperclips and glue that nobody fully understands but everyone depends on. The gaps between our major tools are well known. Getting an XML from one NLE into Resolve. Taking AAFs from Avid to Pro Tools. Moving metadata from camera originals into whatever asset management system feeds your delivery specs. These seams have been held together with duct tape, proprietary scripts, Excel spreadsheets, and occasionally FileMaker databases.

Some engineers had already figured out that a Python script wrapping FFmpeg could handle a lot of this. But nobody had the budget to do it properly. AI tools are perfectly shaped for these gaps. They are fast to create (I did not say easy, just fast), deeply customizable, and they solve the persistent problem of needing something built when there is no budget to hire someone to build it. This is not happening at the margins. It is happening across the industry.
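As a minimal sketch of what such a Python wrapper around FFmpeg might look like: the function names and the ProRes 422 HQ target below are assumptions chosen for illustration, not settings from any particular facility. The point is the shape of the thing: build the command as a list, check the inputs, and let a failed transcode raise an error instead of passing quietly.

```python
import shutil
import subprocess
from pathlib import Path


def build_ffmpeg_command(src: Path, dst: Path) -> list[str]:
    """Return the argument list for a ProRes 422 HQ transcode (illustrative settings)."""
    return [
        "ffmpeg",
        "-hide_banner",
        "-n",                  # never overwrite an existing output file
        "-i", str(src),
        "-c:v", "prores_ks",   # FFmpeg's ProRes encoder
        "-profile:v", "3",     # profile 3 = ProRes 422 HQ
        "-c:a", "pcm_s16le",   # uncompressed 16-bit PCM audio
        str(dst),
    ]


def transcode(src: Path, dst: Path) -> None:
    """Run the transcode and raise if anything goes wrong."""
    if shutil.which("ffmpeg") is None:
        raise RuntimeError("ffmpeg was not found on PATH")
    if not src.is_file():
        raise FileNotFoundError(f"source clip not found: {src}")
    # check=True turns a non-zero ffmpeg exit code into CalledProcessError
    subprocess.run(build_ffmpeg_command(src, dst), check=True)
```

Passing the arguments as a list rather than a single shell string means filenames with spaces or odd characters reach FFmpeg intact, which matters with client-supplied media.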

Where It Breaks

A timecode calculator is fine. A metadata parser is safe. The risk escalates the moment a tool needs to handle authentication, such as OAuth, API keys, or tokens; manage credentials or user identity; interact with cloud services, particularly storage APIs and delivery platforms; move data across network boundaries; or touch anything with security implications, such as government contracts or HIPAA requirements.

We have already seen situations where vibe-coded tools compile and appear to run correctly but fail on edge cases that the builder never tested. Is the tool hashing passwords correctly? Is it following the principle of least privilege?
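To make the password question concrete, here is a minimal sketch of salted password hashing using only Python's standard library. The function names and the iteration count are assumptions for illustration; a real tool should follow current guidance for its platform or use a vetted authentication library. What to look for in generated code is the pattern: a fresh random salt per password, a deliberately slow key-derivation function, and a constant-time comparison.

```python
import hashlib
import hmac
import secrets

ITERATIONS = 600_000  # assumed work factor; tune for your hardware


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, derived_key) for storage. Never store the password itself."""
    salt = secrets.token_bytes(16)  # fresh random salt for every password
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, key


def verify_password(password: str, salt: bytes, expected_key: bytes) -> bool:
    """Recompute the key from the candidate password and compare in constant time."""
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(key, expected_key)
```

If a vibe-coded tool stores passwords in plain text, or hashes them with a single unsalted pass of a fast hash such as MD5 or SHA-256, that is a sign nobody asked the security question.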

Often, the person who prompted the AI is not even aware that these are concerns. The AI will not raise them unless asked. This leads to a practical recommendation: before you start building anything, have a conversation with the AI about what you might be overlooking. Ask it to identify the security assumptions, the failure modes, and the licensing implications. The AI is good at this when prompted; it simply does not volunteer it.

Most facilities are now handling content under NDAs, some with MPA compliance requirements for studio security. Homegrown tools with sloppy credential handling represent real exposure. I am already seeing cases where AI-generated code imported libraries that were the wrong version, licensed under the GPL, not distributable for a fee, or carrying known vulnerabilities. Nobody is running a dependency audit on the script the DIT wrote last week.
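A first-pass dependency inventory does not have to be elaborate. The sketch below, which assumes the tool runs in a Python environment, lists every installed package with its version and self-declared license using the standard library. The function names are hypothetical, the license field is whatever the package author chose to declare, and this does not check for known vulnerabilities; it is a starting point for a conversation, not an audit.

```python
from importlib import metadata


def first_line(text: str) -> str:
    """Reduce a possibly multi-line license field to its first line."""
    lines = text.strip().splitlines()
    return lines[0] if lines else "not declared"


def installed_packages() -> list[tuple[str, str, str]]:
    """Return (name, version, declared license) for every installed distribution."""
    rows = []
    for dist in metadata.distributions():
        meta = dist.metadata
        name = meta.get("Name") or "unknown"
        version = dist.version or "unknown"
        declared = meta.get("License-Expression") or meta.get("License") or ""
        rows.append((name, version, first_line(declared)))
    return sorted(rows, key=lambda row: row[0].lower())


if __name__ == "__main__":
    for name, version, license_name in installed_packages():
        print(f"{name}=={version}  [{license_name}]")
```

Saving that output next to the tool at the moment it is built gives you a record of what it depended on, which is exactly what is missing when something breaks a year later.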

TFT1957 | TV & Film Tech Magazine Special Edition for NAB Show 2026

The Trust and Evaluation Problem: The Five-Question Audit

Whether you are evaluating a tool built internally by your own team or a product from an outside developer or small startup, you need a consistent set of questions. These apply equally to a $200/month SaaS product and to the script your assistant editor wrote over a weekend.

- Who maintains this if you disappear? For a solo developer: is this a codebase they will contractually hand over? Is it well commented and documented? Is it in a repository? If the answer is “it’s on my laptop,” that tells you everything you need to know.

- What happens when an API or platform changes? Every tool that touches an external service is one API deprecation away from breaking. What roadmap does the developer have for ongoing compatibility, or is this a snapshot in time?

- How was this tested? Not “does it work,” but “what did you try to break, and what happened?” If the developer cannot articulate their failure conditions, they have not looked for them.

- What are the security implications? Does it store credentials? Does it store personal information? Does it phone home or require network access? Anything touching content or client data demands clear answers here.

- What is your update and support model? Is this a living product with version numbers and change logs, or is it a moment-in-time release? Is there a way to report bugs beyond a Discord server?

These questions are a minimum bar. The goal is to determine how far down the rabbit hole the developer thought about their own product before shipping it.

The Institutional Knowledge Problem

Facilities have always accumulated tools silently: scripts, macros, batch files, and small utilities that were never catalogued, never documented, and never tracked for dependencies. Then one day an OS patch lands or a Python library gets deprecated, and three tools break at once. Nobody knows why, because nobody tracked what depended on what.

AI-built tools make this worse in two specific ways. First, a vibe-coded tool may only exist in the builder’s head, or more precisely, in the AI’s context window at the moment it was created. The builder may not functionally understand the code. They described what they wanted and got something that works, but they may not know the underlying languages or libraries involved. If that person leaves, gets sick, or goes on vacation, the tool becomes a black box.

Second, the documentation problem is severe. With a major vendor tool, there is almost always a manual, a help reference, training sessions, and a support line. With homegrown or small-developer tools, there is often nothing, or a barely maintained Notion page at best.

Documentation gets attention only when there is enough complaining, or when the lack of it starts preventing sales or burdening corporate training. The person who built the tool is the documentation and the support team, and that single point of failure is a real operational risk.

A Framework for Responsible Adoption

A boutique color house has different needs than a large broadcast facility, which has different needs than a four-person shop embedded in a corporation. Rather than rigid rules, here are principles that scale to your situation.

Scope determines risk. A self-contained tool with no network access and no external dependencies is in a fundamentally different risk category than one requiring authentication against cloud services. Evaluate accordingly.

If it touches your pipeline, it needs an owner. Not the person who built it, but a designated maintainer accountable for its continued operation. I would argue it needs two people and thorough documentation, because if the sole maintainer leaves, you will not know what to do when it breaks. Document at the point of creation, not later. Later never comes.

If an AI built the tool, have the AI generate the documentation. Ask the AI to produce a plain-English explanation of what each component does. This is one of the best places where AI can help solve the problems it creates.

Test for failures, not just success. Feed it bad inputs. Feed it edge cases. Feed it the file a client sent at 2:00 AM with a mangled filename and see what happens. If it fails silently, that is sometimes worse than failing loudly.
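Here is a small illustration of testing for failure, using a hypothetical non-drop-frame timecode converter. The function name, the accepted format, and the list of bad inputs are all assumptions made for the example. The converter raises an error on anything it does not understand, and the checks confirm that each bad input is rejected rather than turned into a plausible-looking number.

```python
import re

_TIMECODE = re.compile(r"(\d{2}):(\d{2}):(\d{2}):(\d{2})")


def timecode_to_frames(timecode: str, fps: int) -> int:
    """Convert non-drop-frame HH:MM:SS:FF to a frame count, or raise ValueError."""
    if fps <= 0:
        raise ValueError(f"fps must be positive, got {fps}")
    match = _TIMECODE.fullmatch(timecode.strip())
    if match is None:
        raise ValueError(f"not a valid HH:MM:SS:FF timecode: {timecode!r}")
    hours, minutes, seconds, frames = (int(part) for part in match.groups())
    if minutes > 59 or seconds > 59 or frames >= fps:
        raise ValueError(f"field out of range for {fps} fps: {timecode!r}")
    return ((hours * 60 + minutes) * 60 + seconds) * fps + frames


# Happy path: one hour at 24 fps
assert timecode_to_frames("01:00:00:00", 24) == 86400

# Failure paths: every bad input must raise, never return a number
bad_inputs = ["", "1:00:00:00", "01:00:00;00", "01:00:00:24", "01:61:00:00", "clip_final_v2.mov"]
for bad in bad_inputs:
    try:
        timecode_to_frames(bad, 24)
    except ValueError:
        continue
    raise AssertionError(f"accepted bad input: {bad!r}")
```

The list of bad inputs is the part worth growing over time: every strange file or value that turns up in production is a candidate for it.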

Separate shims from infrastructure. A tool that solves a one-time problem is fine to build fast and loose. A tool that becomes part of your daily workflow needs to be treated like infrastructure: versioned, backed up, and maintained by someone other than the original creator.

Apply the five questions to yourself and your team. The vendor evaluation questions above apply equally to your own internal tools. If you cannot answer them about something you built, you are not ready to depend on it.

Jeff Greenberg will take part in ‘Visionaries. Scripted by Silicon: AI’s Role in TV and Cinema’

Looking Forward

None of this should read as fear. This is an empowering moment for the industry. The ability to build custom tools quickly is one of the most practical things AI has delivered to our field. Facilities that learn to harness it intelligently will have a competitive advantage: fewer friction points, faster turnaround, and more time for their artists to focus on creative work. One note on that last point: discovered time should be spent on better creativity, not on tightening schedules.

The facilities that let this grow unchecked will find themselves dependent on a tangle of undocumented, unmaintained, poorly secured tools that nobody fully understands. In a professional environment where reliability is the product, that is a serious risk. The answer is not to say no. It is to say yes, with open eyes.

About Jeff Greenberg

Jeff Greenberg is CEO of J Greenberg Consulting, a post-production consultancy operating a 50+ person studio. He is a master trainer across all major NLEs, author of Studio Techniques for Adobe Premiere Pro, and head moderator of five video editing subreddits with over one million combined members. He speaks regularly at NAB, Adobe MAX, IBC, and SXSW.