This article was published in TFT1957 | TV & Film Technology Magazine, April 2026, Issue 792.

Every major technological advancement promises welcome changes to operational workflows. Here are some of the most interesting recent examples of REAL change in media operations:

- One operator for MANY channels.

- Automated conversion and versioning.

- Geographically distributed resources.

- Rights and Licensing Tracking.

One for Many

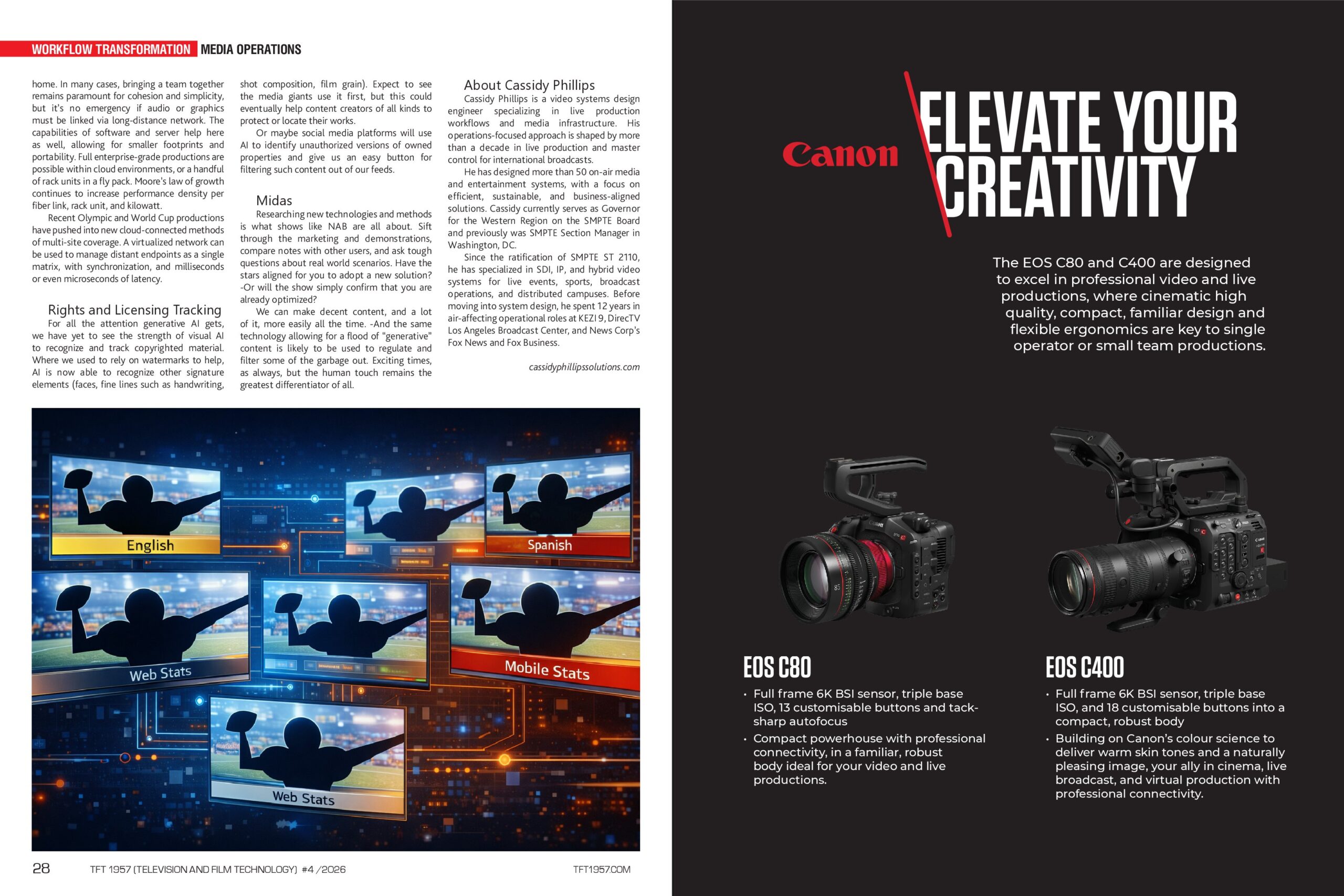

From channel-in-a-box systems to microservices and shared memory, we now have a great variety of ways to power broadcast channel creation. It is easier than ever to manage parallel program paths and keep them synchronized. Live sports coverage can feature regionalized graphics (or even regional camera selections) in addition to local ad breaks. Just a few years ago, that complexity might have required entirely different infrastructure for each channel variant and produced outputs that drifted out of sync.

The latest advances in computing allow for lower latency and tighter control over synchronization. Splitting audio, video, and data into separate essences makes it easier and more economical to carry alternate-language audio and closed captions through a network. The fact that one enterprise server can host multiple HD services, complete with switching, DVE, and graphics, is what makes one operator for many channels practical.

Smarter Automation

Powerful servers also allow for more rules engines, or even “AI” assisted rules, to detect and direct media through a system. It is one thing to recognize “1080p” video; it is a great advancement to recognize that the content of the video is the same as other files or streams in your system (regardless of their resolutions). Content matching is being deployed in file and live workflows today, reducing storage clutter and enhancing exception-based monitoring.

Why bother storing separate versions of a recording for each resolution and frame rate when you can convert automatically, on the fly, from a high-quality mezzanine file? Similarly, human eyes can be reserved for the highest-quality output of a system, while content matching monitors the alternate versions in the background. In a world with ever more screens, and distribution formats to match, a second set of automated eyes is welcome.
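Content-matching products are generally proprietary, but the core idea of recognizing the same picture regardless of resolution can be illustrated with a classic perceptual hash. The sketch below is a minimal illustration, assuming grayscale frames supplied as lists of luma values. It uses the well-known average-hash (aHash) technique, not any particular vendor's method, and the names `average_hash`, `hamming`, `same_content`, and the 5-bit threshold are hypothetical choices. Each frame is box-averaged down to an 8x8 grid, and each cell becomes one bit depending on whether it is brighter than the grid's mean, so an original and a rescaled copy of the same picture yield nearly identical 64-bit fingerprints.

```python
from typing import List

# A grayscale frame: rows of luma values (assumed representation for this sketch).
Frame = List[List[float]]


def downscale(frame: Frame, size: int = 8) -> Frame:
    """Box-average a frame down to a size x size grid of cell means."""
    h, w = len(frame), len(frame[0])
    if h < size or w < size:
        raise ValueError("frame must be at least size x size pixels")
    grid = []
    for r in range(size):
        r0, r1 = r * h // size, (r + 1) * h // size
        row = []
        for c in range(size):
            c0, c1 = c * w // size, (c + 1) * w // size
            block = [frame[y][x] for y in range(r0, r1) for x in range(c0, c1)]
            row.append(sum(block) / len(block))
        grid.append(row)
    return grid


def average_hash(frame: Frame, size: int = 8) -> int:
    """Pack one bit per grid cell: 1 if the cell is brighter than the grid mean."""
    cells = [v for row in downscale(frame, size) for v in row]
    mean = sum(cells) / len(cells)
    bits = 0
    for v in cells:
        bits = (bits << 1) | (1 if v > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two fingerprints."""
    return bin(a ^ b).count("1")


def same_content(a: Frame, b: Frame, threshold: int = 5) -> bool:
    """Treat two frames as the same picture if their hashes differ by few bits.

    The threshold is a hypothetical tuning value, not an industry standard.
    """
    return hamming(average_hash(a), average_hash(b)) <= threshold
```

For instance, with a simple 16x16 gradient frame (pixel value `x * 7 + y * 13`), the frame and a 2x nearest-neighbor upscale of it hash identically, while a luma-inverted copy differs in all 64 bits. A real monitoring system would fingerprint many frames over time and use more robust features, but comparing compact fingerprints rather than raw pixels is what lets one check cover every resolution at once.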

Distributed Resources

If the pandemic taught our industry one thing, it was the reality of remote production and operation. We now know, with absolute certainty, that we can do almost anything from home. In many cases, bringing a team together remains paramount for cohesion and simplicity, but it’s no emergency if audio or graphics must be linked via a long-distance network.

Software and server capabilities help here as well, allowing for smaller footprints and greater portability. Full enterprise-grade productions are possible in cloud environments or in a handful of rack units in a fly pack. Growth in the spirit of Moore’s law continues to raise performance density per fiber link, per rack unit, and per kilowatt.

Recent Olympic and World Cup productions have pushed into new cloud-connected methods of multi-site coverage. A virtualized network can be used to manage distant endpoints as a single synchronized matrix, with latency measured in milliseconds or even microseconds.
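Whether a link lands in the millisecond or the microsecond range is largely a matter of distance. The rough sketch below estimates one-way propagation delay through optical fiber, assuming a group index of about 1.47 for typical single-mode fiber and ignoring switching, encoding, and buffering delays; the function names `fiber_delay_ms` and `frame_period_ms` are hypothetical.

```python
# Rough one-way propagation delay through optical fiber.
# Assumption: group index ~1.47 for typical single-mode fiber; real values
# vary slightly by fiber type and wavelength. Equipment latency is ignored.

C_KM_PER_S = 299_792.458  # speed of light in vacuum, km/s
FIBER_GROUP_INDEX = 1.47  # assumed; light travels at roughly c / 1.47 in glass


def fiber_delay_ms(distance_km: float, group_index: float = FIBER_GROUP_INDEX) -> float:
    """Return one-way propagation delay in milliseconds for a fiber run."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    seconds = distance_km * group_index / C_KM_PER_S
    return seconds * 1000.0


def frame_period_ms(frame_rate: float) -> float:
    """Duration of one video frame in milliseconds (e.g. 59.94 Hz is about 16.68 ms)."""
    return 1000.0 / frame_rate


if __name__ == "__main__":
    # Compare a campus link, a regional link, and a continental link.
    for km in (1, 100, 4000):
        delay = fiber_delay_ms(km)
        frames = delay / frame_period_ms(59.94)
        print(f"{km:>5} km: {delay * 1000:8.1f} us ({frames:.2f} frames at 59.94 Hz)")
```

Under these assumptions, light covers a kilometer of fiber in roughly 4.9 microseconds, so links within a venue or campus stay in the microsecond range, while a 4,000 km span adds about 19.6 ms, a little more than one frame period at 59.94 Hz (about 16.7 ms).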

Rights and Licensing Tracking

For all the attention generative AI gets, we have yet to see the full strength of visual AI in recognizing and tracking copyrighted material. Where we once relied on watermarks, AI can now recognize other signature elements (faces, fine lines such as handwriting, shot composition, film grain). Expect the media giants to use it first, but it could eventually help content creators of all kinds protect or locate their works.

Or maybe social media platforms will use AI to identify unauthorized versions of owned properties and give us an easy button for filtering such content out of our feeds.

Midas

Researching new technologies and methods is what shows like NAB are all about. Sift through the marketing and demonstrations, compare notes with other users, and ask tough questions about real-world scenarios. Have the stars aligned for you to adopt a new solution, or will the show simply confirm that you are already optimized?

We can make decent content, and a lot of it, more easily all the time. And the same technology that enables a flood of “generative” content is likely to be used to regulate it and filter some of the garbage out. Exciting times, as always, but the human touch remains the greatest differentiator of all.

TFT1957 | TV & Film Tech Magazine Special Edition for NAB Show 2026

About Cassidy Phillips

Cassidy Phillips is a video systems design engineer specializing in live production workflows and media infrastructure. His operations-focused approach is shaped by more than a decade in live production and master control for international broadcasts.

He has designed more than 50 on-air media and entertainment systems, with a focus on efficient, sustainable, and business-aligned solutions. Cassidy currently serves as Governor for the Western Region on the SMPTE Board and previously was SMPTE Section Manager in Washington, DC.

Since the ratification of SMPTE ST 2110, he has specialized in SDI, IP, and hybrid video systems for live events, sports, broadcast operations, and distributed campuses. Before moving into system design, he spent 12 years in air-affecting operational roles at KEZI 9, DirecTV Los Angeles Broadcast Center, and News Corp’s Fox News and Fox Business.

https://www.cassidyphillipssolutions.com/