Developed when the major broadcast technology companies circled their wagons to prevent a “video over IP arms race”, SMPTE ST 2110 was designed to help bring SDI into IP with interoperability across manufacturers.

While it sounds like a noble cause, there were many motivating factors, but we can discuss political history another time… Now, some of the same folks who wrote the standard are among those claiming “ST 2110 is dead”. They are wrong, and they likely know it, so what’s with all the misinformation, and what is right for your business?

There is one common request through all of my thousands of engagements with enterprise media production companies: ultimate quality and minimal latency. ST 2110 was designed to meet these requirements, but you may be hearing some interesting stories…

Lie #1 – ‘People Are Not Choosing ST 2110’

‘We are seeing more people choose formats other than ST 2110 for live production!’ This is a half-truth based upon the growing number of Tier 2 and 3 production systems. What’s the reality for Tier 1?

ST 2110, deployed for modern technical landmarks and 16k international sports coverage, has not been exhausted. The standard allows for any aspect ratio within 32k by 32k, countless audio streams, and ancillary data streams to cover legacy information and whatever may come next (insert smell-o-vision jokes per usual). The end is not in sight as of IBC 2025.

Production teams covering live events with SDI were among the earliest adopters of ST 2110. To briefly rehash and summarize: UHD required four times the SDI equipment of HD, making it heavy, power-hungry, and clunky. Packetized UHD over IP, with COTS switch cores, greatly reduced the physical size of the infrastructure and removed points of failure (distribution amplifiers, electrical-optical, large SDI router frames…). Annnnd nothing has changed; premium live events coverage is STILL going ST 2110.
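The "four times" figure follows directly from pixel counts. Here is a minimal back-of-the-envelope sketch, assuming 4:2:2 sampling at 10 bits (an average of 20 bits per pixel) and 60 frames per second; the function name and defaults are illustrative, and the math ignores blanking and packet overhead, so treat the results as rough payload numbers rather than exact link rates.

```python
# Rough payload arithmetic only: active picture, no blanking, no RTP/IP overhead.
# Assumption: 4:2:2 sampling at 10 bits, i.e. an average of 20 bits per pixel.

def active_video_gbps(width, height, fps, bits_per_pixel=20):
    """Return the active-picture bitrate in gigabits per second."""
    return width * height * fps * bits_per_pixel / 1e9

hd_gbps = active_video_gbps(1920, 1080, 60)
uhd_gbps = active_video_gbps(3840, 2160, 60)

print(f"HD  1080p60: {hd_gbps:.2f} Gb/s")
print(f"UHD 2160p60: {uhd_gbps:.2f} Gb/s")
print(f"UHD / HD ratio: {uhd_gbps / hd_gbps:.1f}x")
```

Under those assumptions HD lands at roughly 2.5 Gb/s and UHD at roughly 10 Gb/s. That is why a single UHD signal once meant four HD-class SDI paths, and why it fits comfortably on one port of a COTS switch.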

Several traditional television broadcast companies have publicly gone with ST 2110 as well, from Disney to Warner Bros. Discovery to Sinclair and Hearst… UHD and HDR were motivating factors here, just as in live events production (often broadcast by these companies). The “renaissance of scripted television” has also given us hundreds of hours of content that has been carefully fashioned more like Hollywood films than TV shows of the 20th century. Home viewers (and 4k television sales) demand higher quality to view this “cinema”. Sure, the stream is only a few smashed Mbps by the time it gets to your living room, but content creators want to “protect the mezzanine” for as much of the production-to-consumer path as possible. Lightly compressed JPEG XS, sometimes even of the ST 2110-22 variety, has been embraced for routing by a few companies.

I have yet to hear of a Tier 1 production, program, or broadcast channel relying upon a lower quality format. And yes, higher compression means lower quality, no matter what marketing language they use. No client involved with a Tier 1 production has asked for anything less than ST 2110 since at least 2019. Which brings our heads into the clouds…

Lie #2 – ‘You Must Sacrifice Latency for Cloud’

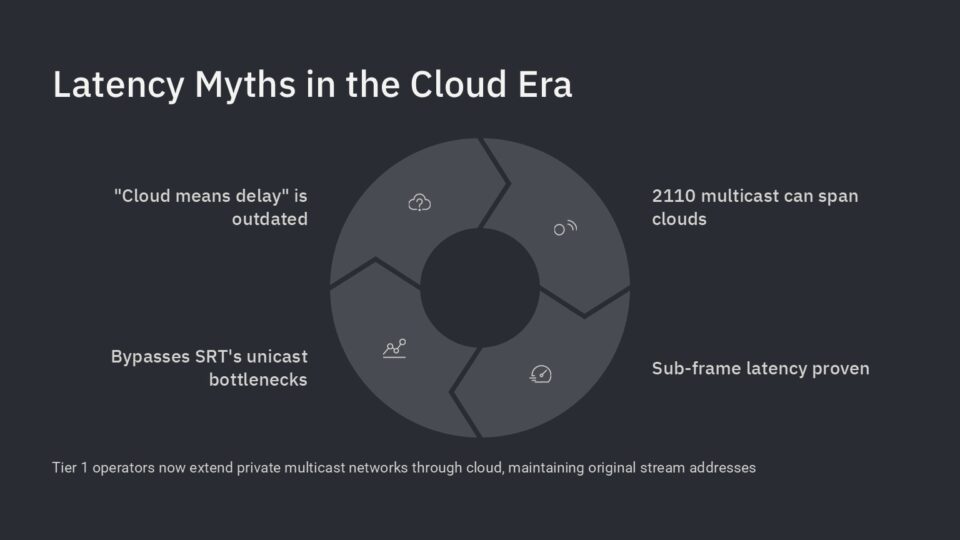

‘You have to compress and encode your video for transport to and from the cloud, and the trip will add seconds of latency to your workflow’…

Our friends in the VSF recently voted to make SRT the method of choice for ground-to-cloud and cloud-to-ground video links. Makes sense: tried and true, trusted… So why are all the biggest live media companies omitting “SRT” when asking for expansion of their router into the clouds? Because they want to extend their private network through the cloud, retain the original stream addresses, minimize latency, and minimize points of failure. SRT is great for many things, perfect for sharing streams between separate rights holders, but it cannot facilitate a seamless extension of your private network. Secure Reliable Transport interrupts the multicast stream and creates something entirely new in a different network (this process is repeated for each link, as unicast, so no one-to-many function either). Why wasn’t this considered in the VSF? Because the VSF is composed of broadcast technology development companies, which each already support SRT, the voice of the content creator is largely absent from that meeting room.
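To put a number on the "no one-to-many" point, here is a small sketch of the bandwidth leaving a sender when the same stream goes to several receivers. The function name and the 100 Mb/s stream rate are hypothetical, chosen only for illustration; the model assumes one point-to-point session per receiver for unicast, and a single copy replicated by the network for multicast.

```python
# Illustrative model only: the stream rate and receiver counts are made up.

def sender_egress_mbps(stream_mbps, receivers, multicast):
    """Bandwidth leaving the sender for one stream delivered to N receivers."""
    # Multicast: the sender emits one copy and the network replicates it.
    # Unicast: the sender emits one copy per receiver.
    copies = 1 if multicast else receivers
    return stream_mbps * copies

STREAM_MBPS = 100  # hypothetical stream rate

for receivers in (1, 4, 16):
    unicast = sender_egress_mbps(STREAM_MBPS, receivers, multicast=False)
    multicast = sender_egress_mbps(STREAM_MBPS, receivers, multicast=True)
    print(f"{receivers:>2} receivers: unicast {unicast} Mb/s, multicast {multicast} Mb/s")
```

The unicast cost grows with every destination, and each of those sessions is also a new stream with a new address to track. Extending the multicast group keeps one stream and one address.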

SMPTE ST 2110 multicast has been successfully extended, from ground to cloud and back, in multiple systems since 2024. This has been demonstrated with sub-frame latency over public Internet connections. If this is news to you, you SHOULD ask your friends ‘why?’

Lie #3 – ‘Everything Can Just Be Open Source’

‘There is no longer a need for proprietary products by broadcast technology vendors’

While the idea of open-source technical solutions sounds good at a glance, it only works if you can motivate smart people to make good contributions to the source. Because we do not live in a global socialist utopia, people must earn money to live. Really good work usually warrants bigger paychecks. Certain powers in media may have the capital to fund such work and even retain special architects… but it leaves smaller players unrepresented and provides opportunities for technological incompatibility between media sources (see NTSC vs PAL and extrapolate). It also removes the traditional liability and support structures on which much of the industry is built. Hard to point the finger at everyone… If all the significant portions of the source code are from your full-time staff, do you point the finger at yourself?

Lie #4 – ‘Micro-services and Shared Memory can Handle Everything’

‘Let’s put your entire broadcast workflow in one powerful computer server!’ It is a statement of ever-increasing server capabilities…

Meanwhile, over the past few years, data centers have been slowly adding FPGA resources to improve deterministic behavior and reduce latency. Your home computer is weird, gets a bit unruly at times, and needs a good power cycle to “remember who it is”. Unfortunately, enterprise-grade servers are not without these faults and require careful planning for use in critical workflows. I’m not saying FPGA is infallible, but my FPGA devices probably are not trying to gather my personal shopping preferences while updating thirty background services AND pushing over ten gigabytes-per-second of video data. Buy two, plumb for diversity, and make sure you have easy “swap to back-up” buttons.

A more reasonable approach you may hear is ‘We can dynamically split processes across multiple servers in the form of load balancing!’ Micro-services and containerization allow for some very cool distributed-infrastructure models. With good rules and perhaps some “AI”, most large data centers will soon behave in this way. I can think of several old projects that might have benefited from such an architecture, and there is much to explore in this area. That said… My comments about non-deterministic servers still apply here, and any critical workflow will demand careful planning for failures.

Lie #5 – ‘The Industry Does Not Need Standards’

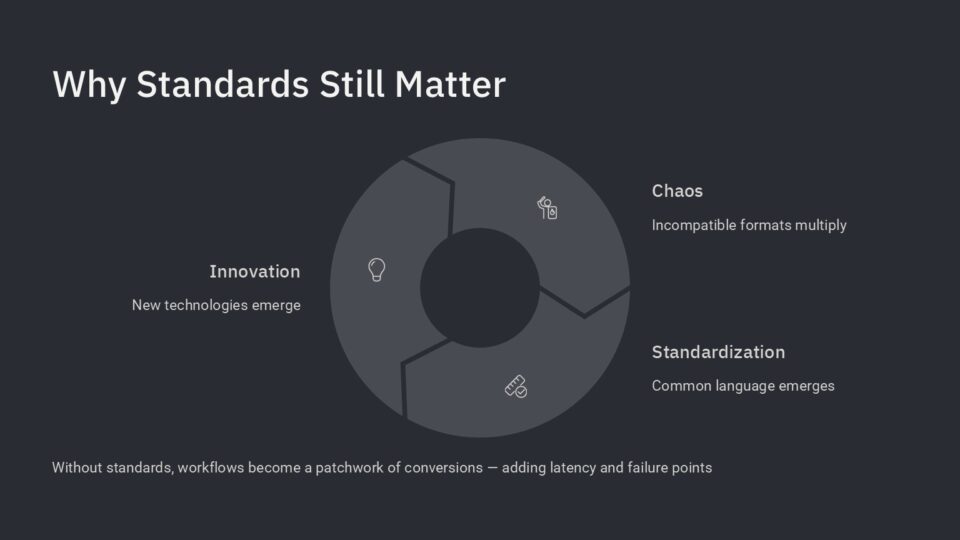

‘Technology is so powerful, you can simply throw anything into a server and use micro-services to translate or convert!’

Apparently, the broadcast industry has a ten-year technology cycle, in which innovation leads to chaos and then order, before another major innovation or push and restart. People telling you we don’t need standards are looking to sell you something non-standard, or format conversion tools. I guess hardware has evolved such that one powerful server could be filled with micro-services and perform the entire uncompressed “intake, convert, process, edit, branding, monetization, personalization, transmission to CDN” workflow… in Standard Definition. You’re going to have to smash it for UHD… and 8k and 16k are in the wild now.

A stop in the workflow for encode, decode, or conversion will always add latency and a point of failure, whether it occurs in an FPGA or a microservice in a server. While there are media giants who must deal with the acquisition and transmission of multiple video formats, it is always best to minimize variables or even settle on a single format for the core. One benefit of COTS architectures is that they do not force us to choose, and it is possible to divide your infrastructure into several networks dedicated to different media types. But this again requires planning and maintenance. We are many years away from a world in which a major international news hub can simply throw streams into a computer network and trust software to rapidly make the proper conversions.

Truth: Nothing Stresses I.T. like Video

Whether streaming UHD or just trying to render a video file out of your home edit program, nothing beats a computer network harder than video. Our industry is constantly increasing the bandwidth and size of media data, and even the most advanced infrastructures are being pushed to keep up. Some of the authors of ST 2110 knew this. Bigger rasters. Higher frame rates. More audio. More metadata. The standard allows for this. IPMX adds nice details for integration with pro-av and consumer products. When will we hit the 32k wall? 2035 AD?

More Truth: What ST 2110 Cannot Do

Even an evangelist like myself cannot pretend SMPTE ST 2110 is perfect. Here are a couple of knocks against the documents as they stand:

- Though viewed as an integral piece of most ST 2110 systems, NMOS is actually not included within the official standard documents. This was not an oversight; the priority of the group was simply on transport of media rather than operational switching/orchestration. I was involved in the deployment of several non-NMOS systems, which relied instead upon shared APIs like Ember+, but widespread support for NMOS makes it the easy choice when configuring a routing system.

- ST 2110 was based on SDI. It expands on the capabilities greatly, but it is worth noting that we are dealing with “uncompressed SDI” and not an uncompressed raw output of a premium camera (the UHD bandwidth of RED raw is XX!). There is always a possibility that someone will want to move an even higher-quality mezzanine through their router!

I’m sure other experts have their own gripe list. The standards are written by humans, can be changed by humans, and are likely to evolve as the world (of content creators) demands.

The Ultimate Truth: Content Creators Remain King

Media content creators drive this industry. Make a gorgeous 16k show that everyone wants to see in a pristine fashion, and suddenly we all need new screens in our homes. Want to use AI to create millions of hyper-personalized program feeds for unique viewers? Suddenly, every technology provider is building that solution. We hear a lot of buzzwords and slick phrases, from our social media feeds to trade shows, but ALWAYS ask “why?”… Why does this matter? Who benefits? Is this the best solution to the problem, or is someone just trying to squeeze more ROI out of their legacy development? Is this the right fit for my operation, or is someone just trying to disrupt a paradigm to gain some market share?

Yes, this looks very cool, and thanks for the drink, but explain exactly how this helps my business?

Who the Heck is this guy?

Cassidy Lee Phillips is an independent consultant with expertise in live operations and enterprise computer networks for critical data. He has worked with hundreds of clients across thousands of engagements, from small classroom systems to large media data centers and ground<>cloud systems, including the successful design and go-live of over forty SMPTE ST 2110 systems. Cassidy is happy to take on your weirdest, hardest, even “dumbest” questions.