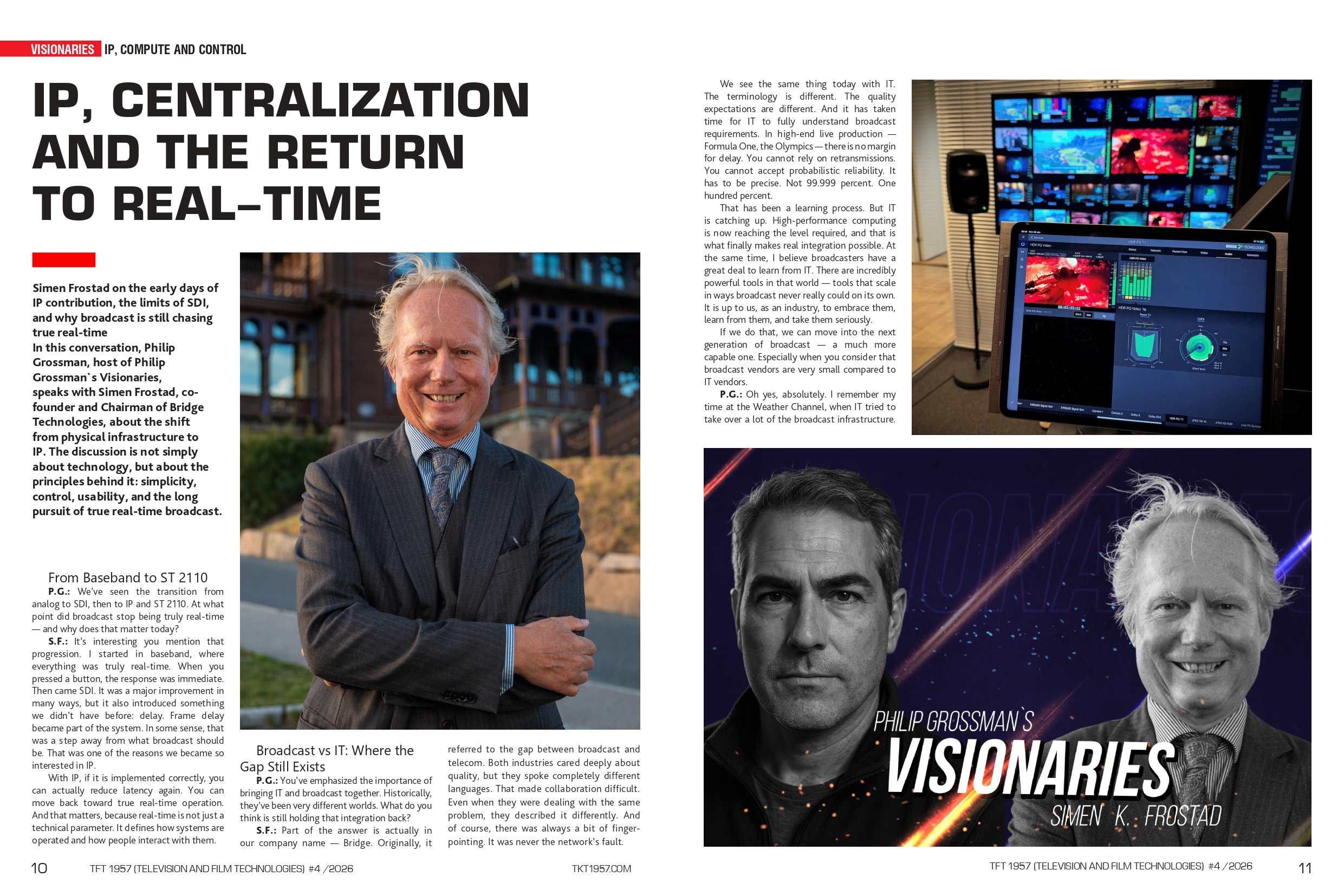

Simen Frostad, co-founder and Chairman of Bridge Technologies, on the early days of IP contribution, the limits of SDI, and why broadcast is still chasing true real-time. In this conversation, Philip Grossman, host of Philip Grossman’s Visionaries, speaks with Simen Frostad about the shift from physical infrastructure to IP. The discussion is not simply about technology, but about the principles behind it: simplicity, control, usability, and the long pursuit of true real-time broadcast.

This article was published in TFT1957 | TV & Film Technology Magazine, April 2026, Issue 792.

From Baseband to ST 2110

P.G.: We’ve seen the transition from analog to SDI, then to IP and ST 2110. At what point did broadcast stop being truly real-time — and why does that matter today?

S.F.: Interestingly, you mention that progression. I started in baseband, where everything was truly real-time. When you pressed a button, the response was immediate. Then came SDI. It was a major improvement in many ways, but it also introduced something we didn’t have before: delay. Frame delay became part of the system. In some sense, that was a step away from what broadcasting should be. That was one of the reasons we became so interested in IP.

With IP, if it is implemented correctly, you can actually reduce latency again. You can move back toward true real-time operation. And that matters, because real-time is not just a technical parameter. It defines how systems are operated and how people interact with them.

Broadcast vs IT: Where the Gap Still Exists

P.G.: You’ve emphasized the importance of bringing IT and broadcast together. Historically, they’ve been very different worlds. What do you think is still holding that integration back?

S.F.: Part of the answer is actually in our company name — Bridge. Originally, it referred to the gap between broadcast and telecom. Both industries cared deeply about quality, but they spoke completely different languages. That made collaboration difficult. Even when they were dealing with the same problem, they described it differently. And of course, there was always a bit of finger-pointing. It was never the network’s fault.

We see the same thing today with IT. The terminology is different. The quality expectations are different. And it has taken time for IT to fully understand broadcast requirements. In high-end live production — Formula One, the Olympics — there is no margin for delay. You cannot rely on retransmissions. You cannot accept probabilistic reliability. It has to be precise. Not 99.999 percent. One hundred percent.

That has been a learning process. But IT is catching up. High-performance computing is now reaching the level required, and that is what finally makes real integration possible. At the same time, I believe broadcasters have a great deal to learn from IT. There are incredibly powerful tools in that world — tools that scale in ways broadcast never really could on its own. It is up to us, as an industry, to embrace them, learn from them, and take them seriously.

If we do that, we can move into the next generation of broadcast — a much more capable one. Especially when you consider that broadcast vendors are very small compared to IT vendors.

P.G.: Oh, yes, absolutely. I remember my time at the Weather Channel, when IT tried to take over a lot of the broadcast infrastructure. The biggest issue was SLA. For them, it was simple: “Is your email down? Is your database down? Put in a ticket, and we’ll get back to you in 30 minutes.”

And we were saying: “No. We are live on air.”

Eventually, they just said, “Okay, you take it back. We can’t meet your SLA requirements.”

S.F.: That’s exactly right. I remember IBC, maybe ten or twelve years ago, when IBM had a huge presence. They were very confident. They were essentially saying: “We are taking over.” The year after, they were gone. Because they realized this was outside both their comfort zone and their capability at the time.

Multicast, Memory, and the Next Layer of Infrastructure

P.G.: I completely agree with your point about compute versus cloud. I tend to avoid the word “hybrid” as well. I prefer to think in terms of fully integrated systems, where everything works together as intended. Which brings me to multicast. I’ve always been a strong supporter of multicast in IP environments. But with the shift toward cloud, multicast has been sidelined. From your perspective, does multicast still matter for low-latency live production?

S.F.: Absolutely. Multicast is actually what made networks truly exciting to me. It allows you to distribute signals to an almost unlimited number of endpoints, as long as you have the bandwidth. It behaves very much like a traditional router. All signals are available, and you can select what you need without affecting anything else. That is still incredibly powerful.

At the same time, we are moving into a new phase. Networks continue to grow, and we will soon be dealing with terabit-scale connectivity. But we are also starting to move processing away from the network itself. Technologies like shared memory architectures, RDMA, and clustered computing allow systems to operate directly within shared memory spaces. Instead of moving data through the network between processes, you can process everything in parallel within the same memory domain.

That reduces latency significantly. And interestingly, even though the underlying systems are becoming more advanced, it can actually reduce operational complexity. You can perform scaling, color correction, and other transformations directly in memory, without constantly moving data back and forth. In a way, multicast made IP behave like a router. And now we are moving toward similar capabilities inside memory itself. All of these developments are connected. They allow us to process faster, more accurately, and most importantly, in parallel.
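The router-like behavior of multicast described above can be illustrated with a minimal receiver sketch: an endpoint subscribes to a group address, and the network delivers that stream without the sender or any other receiver being affected. This is only an illustration using Python's standard `socket` module. The function names, the group address `239.1.1.1`, and the port `5004` are assumptions chosen for the example, not anything taken from Bridge Technologies' products.

```python
import ipaddress
import socket


def build_membership_request(group: str, interface: str = "0.0.0.0") -> bytes:
    """Pack the 8-byte membership request: group address + local interface."""
    if not ipaddress.ip_address(group).is_multicast:
        raise ValueError(f"{group} is not a multicast address")
    # 4 bytes of group address followed by 4 bytes of interface address.
    return socket.inet_aton(group) + socket.inet_aton(interface)


def open_receiver(group: str, port: int) -> socket.socket:
    """Join a multicast group and return a UDP socket bound to its port."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    # Allow several receivers on the same host to listen to the same port.
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    # Joining the group is the "select what you need" step: it asks the
    # network to start delivering this stream to this endpoint.
    sock.setsockopt(
        socket.IPPROTO_IP,
        socket.IP_ADD_MEMBERSHIP,
        build_membership_request(group),
    )
    return sock
```

On a host with a multicast-capable interface, calling `open_receiver("239.1.1.1", 5004)` and then `recvfrom(2048)` on the returned socket would read one packet from the hypothetical stream. Adding a second receiver requires no change on the sending side.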

Visibility, Usability, and the End of the Patch Bay Mindset

P.G.: Why is real-time visibility the critical factor for making IP networks reliable in broadcast operations?

S.F.: One of the key things we realized early on was that IP itself is not the problem. The problem is visibility. If you want people to trust IP for broadcast, they need to understand how the network behaves in real time.

That was actually one of the main reasons we started Bridge Technologies. We had a fairly unique understanding of how packets move through a network, and more importantly, how to visualize that movement in a way that non-engineers could understand. Because if only a handful of experts understand the system, it doesn’t scale. But if operators, not just engineers, can see what’s happening, then suddenly you can build networks that are robust enough for high-end broadcast. That was the trigger.

And from there, we kept refining the tools. At the beginning, we were working with 100 megabit interfaces, which at the time felt enormous. Today, we’re talking about 1, 10, 100, 200, 400, and even 800 gigabits. The scale has changed dramatically. But fundamentally, nothing has changed. It’s still packets moving through a network. The real question is how you stay in control, and how you give people confidence that the system is actually working.

P.G.: One thing I’ve noticed, and I think we share this, is the importance of usability. From what I’ve seen in your products and your philosophy, usability is not secondary for you. But I still hear engineers say that usability doesn’t matter. What are you actually seeing in practice? Do teams really perform better because of improved usability?

S.F.: Absolutely. Without question. Broadcast has always had brilliant engineers, people who can build incredibly sophisticated systems. But the success of broadcasting has never depended only on engineers. It depends on creatives. And when I say creatives, I mean vision mixers, camera shaders, graphics operators, slow-motion operators, and of course, on-air talent. If you can give each of these people a simpler way to improve production quality, that’s the winning formula.

The technical details, honestly, have never been the main thing. They are essential because they are the foundation. But they are still only the foundation. What matters is how the platform is used. That’s what drives audience experience, that’s what drives quality, and that’s what defines the final content. Our approach has increasingly been to enable creatives. Give them better tools. Give them easier access. And when those tools become remotely accessible, something interesting happens: quality goes up, and stress goes down, especially in high-profile productions.

I truly believe that the only way to gain a competitive advantage is to empower users, and in our case, that means operators. It means removing as much unnecessary technical complexity as possible. Not eliminating complexity, because it still exists, but taking it away from the user.

Why We Prefer “Compute” to “Cloud”

P.G.: Why does Bridge Technologies focus on compute rather than the cloud when thinking about the future of broadcast infrastructure?

S.F.: This also connects to another point. In broadcasting, people talk endlessly about the cloud. Cloud this, cloud that. We have never been especially fascinated by that word. We prefer another word: compute.

Because compute is what actually matters. Whether the computer is here or somewhere else is a secondary question. If you have fiber between locations, then the location becomes a design choice. What really changes the game is the shift away from highly specialized embedded systems toward general-purpose compute — toward standard servers.

That is an extraordinary increase in potential performance. CPUs improve every year. Capacity keeps growing. And once your architecture becomes software-based, once your applications can run on general-purpose computing platforms, the advantages become very significant. It also changes the relationship between broadcast and IT. Instead of viewing the IT industry as some kind of invading force, we can use the best tools IT has developed to help broadcasters do more of what broadcasters actually do best: create content. That is the real opportunity.

And for us, this direction is unavoidable. If you are not leading it, then you need to be following it very quickly.

The Olympic Model

P.G.: How did the Olympics help validate remote production as a better long-term model for major live events?

S.F.: The next example, which benefited from everything learned in those events, was the Olympics. A large proportion of the Olympic venues in Paris and elsewhere were handled remotely. That meant most of the deep engineering, most of the shading, and a significant amount of graphics insertion were centralized. And that is really the direction the industry is moving in. The only real limit is distance, because the speed of light is the only real constraint: in practice, the only meaningful latency comes from distance itself.

One part of it is cost efficiency, of course. If you no longer need to fly large numbers of specialists to a venue for a one-week or two-week event, the savings are considerable. But that is only part of the story. The other part is performance.

When people are centralized, they work in a calmer and more controlled environment. Instead of being packed into an outside broadcast truck, they can work in a proper broadcast center. And in that kind of environment, they simply do a better job. That, to me, is one of the biggest improvements over the older way of delivering major events.
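The point above about distance being the only meaningful source of latency can be put into rough numbers. The sketch below estimates one-way propagation delay over optical fiber and expresses it as a fraction of a video frame. The refractive index of 1.468 and the 50 frames-per-second default are assumed typical values, not figures from the interview, and real links add switching and processing delay on top.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # speed of light in vacuum
FIBER_REFRACTIVE_INDEX = 1.468  # assumed typical value for single-mode fiber


def one_way_delay_ms(distance_km: float,
                     refractive_index: float = FIBER_REFRACTIVE_INDEX) -> float:
    """Propagation delay in milliseconds over a fiber of the given length."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    # Light travels slower in glass: effective speed is c / refractive_index.
    return distance_km * refractive_index / SPEED_OF_LIGHT_KM_PER_S * 1000.0


def delay_in_frames(distance_km: float, frames_per_second: float = 50.0) -> float:
    """The same delay expressed as a fraction of one video frame."""
    return one_way_delay_ms(distance_km) * frames_per_second / 1000.0
```

Under these assumptions, a 1,000 km fiber path costs a little under 5 ms one way, which is roughly a quarter of a frame at 50 frames per second.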

The Next Leap: Remote Expertise, Compute, and AI

P.G.: If we look at the evolution of IP infrastructure, we are moving toward 800-gigabit systems. I have 5-gigabit connectivity at home now. From 300-baud modems as a kid to this — it’s incredible. We have HTML5 interfaces. We have remote shading at global events. What is the next big leap?

S.F.: First of all, it is about making remote work truly effective. Right now, there are still things you do not want to do from home. That will change. Because the real goal is to have experts available without needing them to be physically present. They should have all the tools they need to evaluate and act, even from something as simple as a phone. That is a major shift.

The second part is compute. More computing does not just mean more processing power. It also enables augmentation.

P.G.: You mean AI?

S.F.: Yes — but not in the way people usually talk about it. AI is only as good as the data you feed into it. Garbage in, garbage out. The real foundation is not AI itself. It is metrics. If you can provide objective, high-quality, real-time data through APIs, then AI can actually assist operators. And that is where it becomes interesting. Because now the operator becomes more capable. Not replaced — augmented. And that, I believe, is where the next real shift will happen. You may need fewer operators, but they will be far more capable. They will have richer tools. They will perform at a higher level.

But none of that works without proper data. If you do not have high-quality, real-time metrics feeding into your compute layer, then AI cannot deliver meaningful results. At the same time, cost pressure is increasing dramatically, especially in high-end broadcast. We need to counter that with smarter infrastructure, better use of compute, and stronger integration with general IT systems.

Design Philosophy: Reliability, Clarity, and Usability

P.G.: All right, let’s strip everything back for a moment. What’s your design philosophy? Do you lean toward elegance, efficiency, empowerment — or something more poetic? How do you see the world through what you build?

S.F.: There are a few fundamentals you simply cannot ignore. First, it has to work. That is non-negotiable. Technology means nothing if it is not one hundred percent reliable. Not 99.999. In live broadcast — whether it is the playoffs, a major final, or the Super Bowl — it simply has to work. That is the baseline.

The second question is who you are designing for. For us, the answer is simple: operators. If the tools are not intuitive, if people need manuals or months of training, then you have already failed, because that directly affects both efficiency and cost. And cost reduction, in the right sense, is not about cutting capability. It is about enabling better quality. If users can understand complex systems intuitively, they can focus on what actually matters. That is where the real gain is.

The challenge, of course, is how to achieve that, because simplification is extremely complex. You cannot dumb things down. That does not work. You have to present highly complex data in an immediately understandable way, without losing depth. That is what we spend most of our time on.

https://tkt1957.com/tft1957-tv-film-tech-magazine-special-edition-for-nab-show-2026